
AI Code Detector: How to Check if Code Was Written by ChatGPT, Copilot, or Claude

AI coding assistants such as GitHub Copilot, ChatGPT, and Claude let software developers write code at a much faster pace, and they have revolutionized developer productivity. That speed comes with hidden risks, however, affecting software supply chain security, copyright, and hiring integrity.

Stanford's MOSS (Measure of Software Similarity) can help flag suspicious scripts, but it was built to detect similarity between submissions rather than AI authorship, and a savvy developer can reorder methods and restructure their code to go undetected. It is also only available for non-commercial use. Reliably determining whether code is AI-generated requires specialized infrastructure, and enterprise platforms like Pangram are now stepping in to provide dedicated AI code detection.

If you are asking, “Can AI-generated code be detected?”, the answer is yes. However, detecting AI code is fundamentally different from, and harder than, detecting AI text. This guide explores the following points:

- The patterns of machine-generated code.

- The enterprise use cases for detection.

- How to implement a “Human-in-the-Loop” governance strategy for detecting AI-generated code.

Pangram AI Detection for Developers

Why is Detecting AI Code So Difficult? (The "Degrees of Freedom" Problem)

AI-generated code is harder to detect than AI-generated prose because programming languages have fewer "degrees of freedom": a developer has far fewer stylistic and structural choices available than an author writing prose does.

Languages like C and Assembly have very strict syntax requirements. When a human solves a well-defined problem, they may arrive at the most efficient function for it, and an AI may produce that same function for the same reason. As a result, a human and an AI can write functionally identical code.

Standard boilerplate code carries little statistical signal, so an AI detector cannot confidently label it as either AI-generated or human-written. The same is true of simple configuration files.
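A minimal sketch of the problem, using only Python's standard `tokenize` and `keyword` modules: once identifier names and comments are stripped, two short functions written independently collapse to the same token sequence. The function name `normalized_tokens` and both sample functions are hypothetical, and real detectors look at far more than token shape, but the example shows how little stylistic signal a short, well-defined function leaves behind.

```python
import io
import keyword
import tokenize

# Layout and comment tokens carry no structural information for this comparison.
SKIP = {tokenize.COMMENT, tokenize.NL, tokenize.NEWLINE, tokenize.INDENT,
        tokenize.DEDENT, tokenize.ENDMARKER, tokenize.ENCODING}


def normalized_tokens(source: str) -> list[str]:
    """Tokenize Python source, replacing every non-keyword name with 'ID'."""
    tokens = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type in SKIP:
            continue
        if tok.type == tokenize.NAME and not keyword.iskeyword(tok.string):
            tokens.append("ID")  # identifier names are a free choice, so erase them
        else:
            tokens.append(tok.string)
    return tokens


# Hypothetical "human" and "AI" versions of the same trivial function.
human_version = "def add(a, b):\n    return a + b\n"
ai_version = "def total(x, y):\n    # Return the sum of both inputs.\n    return x + y\n"

print(normalized_tokens(human_version) == normalized_tokens(ai_version))  # True
```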

Use Case 1: Software Security and IP Governance

CTOs and Legal Ops teams use AI code detectors to verify the origins of their codebase. The goal is to ensure that developers are not shipping uncopyrightable AI-generated IP or hallucinated, vulnerable logic.

In the US, content generated entirely by AI, without human authorship, cannot be copyrighted. If a startup's core product was generated entirely by Copilot without human oversight, the startup may be unable to copyright that product.

An AI code detector is a vital first step in security workflows: if a piece of code is flagged as 100% AI-generated, it needs a rigorous manual security review before it is merged.
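That review rule can be expressed as a simple merge gate. This is a hypothetical sketch, not Pangram's API: `FileResult.ai_fraction` stands in for whatever share-of-AI score your detector reports, and the 1.0 default threshold mirrors the "100% AI-generated" rule.

```python
from dataclasses import dataclass


@dataclass
class FileResult:
    path: str
    ai_fraction: float  # hypothetical detector output: 0.0 (human) to 1.0 (fully AI)


def files_needing_review(results: list[FileResult], threshold: float = 1.0) -> list[str]:
    """Return paths whose AI share meets the threshold, sorted for stable output."""
    return sorted(r.path for r in results if r.ai_fraction >= threshold)


def can_merge(results: list[FileResult], reviewed: set[str], threshold: float = 1.0) -> bool:
    """Allow the merge only once every flagged file has had a manual security review."""
    return all(path in reviewed for path in files_needing_review(results, threshold))


results = [FileResult("auth/session.py", 1.0), FileResult("utils/format.py", 0.4)]
print(files_needing_review(results))                     # ['auth/session.py']
print(can_merge(results, reviewed=set()))                # False
print(can_merge(results, reviewed={"auth/session.py"}))  # True
```

Teams that want a stricter gate could lower `threshold`; the right value is a policy decision that this sketch does not settle.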

Use Case 2: Technical Hiring and Developer Assessments

Hiring managers run AI code detectors on technical take-home assignments to confirm that candidates understand the logic they are submitting. They don't want candidates who copy and paste ChatGPT output; they want candidates who understand the reasoning behind it.

A developer who relies entirely on an LLM to pass a coding test will likely fail when asked to debug complex, undocumented legacy systems. Real-world code is complex, and a developer who cannot navigate that complexity without ChatGPT may be unable to perform their role.

Rather than banning AI outright, recruiters use detection results to prompt interview questions. For example: "I see this function relies heavily on AI assistance. Can you walk me through why the model chose this specific loop structure?"

Patterns to Look For: How to Manually Spot AI Code

Accurate AI detection at high volume requires software, but at smaller volumes, manual reviewers can spot AI code by looking for:

- Hyper-specific commenting styles.

- Over-documentation.

- Extreme internal similarity.

AI models such as Claude and ChatGPT are trained to be helpful, which leads them to insert exhaustive, unnatural comments on nearly every line of code. Human developers rarely do this, so comments of this sort are an AI tell.
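One rough way to quantify over-commenting is to compare how many lines carry a comment with how many carry code. The sketch below does this for Python using the standard `tokenize` module, so a `#` inside a string literal is not miscounted. The function name and both samples are hypothetical, and a high ratio is only a prompt for a closer look, not proof of AI authorship.

```python
import io
import tokenize

# Tokens that are neither comments nor code.
LAYOUT = {tokenize.NL, tokenize.NEWLINE, tokenize.INDENT,
          tokenize.DEDENT, tokenize.ENDMARKER, tokenize.ENCODING}


def comment_density(source: str) -> float:
    """Lines containing a comment, divided by lines containing code."""
    comment_lines, code_lines = set(), set()
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type == tokenize.COMMENT:
            comment_lines.add(tok.start[0])
        elif tok.type not in LAYOUT:
            code_lines.add(tok.start[0])  # record the line where the token starts
    return len(comment_lines) / len(code_lines) if code_lines else 0.0


terse = "def area(w, h):\n    return w * h\n"
verbose = (
    "# Define a function to compute the area.\n"
    "def area(w, h):\n"
    "    # Multiply the width by the height.\n"
    "    result = w * h  # Store the product.\n"
    "    # Return the result.\n"
    "    return result\n"
)
print(comment_density(terse))    # 0.0
print(comment_density(verbose))  # 4 comment lines / 3 code lines
```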

In academic or hiring settings, AI-generated code often looks nearly identical across multiple submissions. Similarity tools such as MOSS can highlight this overlap, which then acts as a secondary signal of AI generation.
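Cross-submission similarity can be approximated by comparing overlapping token k-grams after erasing identifier names, so that renamed copies still match. MOSS itself uses a more robust fingerprinting scheme (winnowing); this is a simplified stand-in. The regex tokenizer, the `#`-comment stripping (which would also clip a `#` inside a string), k = 4, and the three submissions are all illustrative assumptions.

```python
import keyword
import re

TOKEN_RE = re.compile(r"[A-Za-z_]\w*|\d+|[^\w\s]")


def kgrams(source: str, k: int = 4) -> set[tuple[str, ...]]:
    """Set of k-token windows, with identifiers replaced by 'ID'."""
    source = re.sub(r"#[^\n]*", "", source)  # assumption: Python-style line comments
    tokens = [
        "ID" if (t[0].isalpha() or t[0] == "_") and not keyword.iskeyword(t) else t
        for t in TOKEN_RE.findall(source)
    ]
    return {tuple(tokens[i:i + k]) for i in range(len(tokens) - k + 1)}


def similarity(a: str, b: str, k: int = 4) -> float:
    """Jaccard similarity of the two submissions' k-gram sets."""
    ga, gb = kgrams(a, k), kgrams(b, k)
    union = ga | gb
    return len(ga & gb) / len(union) if union else 0.0


# Hypothetical submissions: B is A with renamed variables and an added comment.
sub_a = "def f(xs):\n    total = 0\n    for x in xs:\n        total += x\n    return total\n"
sub_b = "def g(items):\n    s = 0  # running sum\n    for it in items:\n        s += it\n    return s\n"
sub_c = "def h(n):\n    return n * n\n"

print(similarity(sub_a, sub_b))        # 1.0
print(similarity(sub_a, sub_c) < 0.5)  # True
```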

How Pangram's AI Code Detector Works

To check whether code is AI-generated, Pangram uses deep learning to identify the statistical fingerprint that AI models leave in their output.

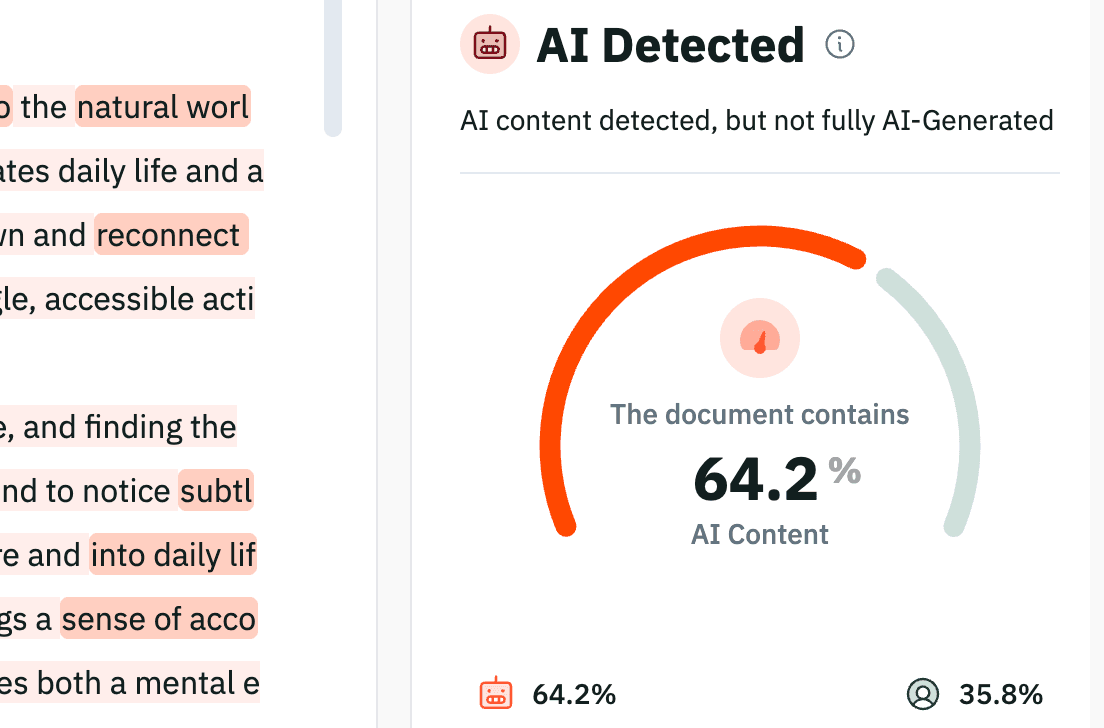

Pangram has a 96.2% accuracy rate and a near-zero false positive rate of 0.3%, largely because of its deep learning approach.
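When comparing detectors, it helps to be precise about what these rates measure. The sketch below computes them from a confusion matrix in which "positive" means "flagged as AI". The counts are invented for illustration and are not Pangram's evaluation data. Accuracy also depends on the mix of human and AI samples in a test set, which is why false positive and false negative rates are worth reporting separately.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    tp: int  # AI-generated code correctly flagged as AI
    fp: int  # human-written code wrongly flagged as AI
    tn: int  # human-written code correctly passed as human
    fn: int  # AI-generated code missed (passed as human)

    def accuracy(self) -> float:
        return (self.tp + self.tn) / (self.tp + self.fp + self.tn + self.fn)

    def false_positive_rate(self) -> float:
        # Share of human-written samples that were wrongly flagged.
        return self.fp / (self.fp + self.tn)

    def false_negative_rate(self) -> float:
        # Share of AI-generated samples that slipped through.
        return self.fn / (self.fn + self.tp)


# Illustrative counts only: 100 AI samples and 200 human samples.
c = Confusion(tp=90, fp=2, tn=198, fn=10)
print(c.accuracy())             # 0.96
print(c.false_positive_rate())  # 0.01
print(c.false_negative_rate())  # 0.1
```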

Pangram's Accuracy on Code Over 40 Lines Long

Unlike other AI code detectors, Pangram is intentionally conservative. It is designed to let some AI boilerplate through, with an 8.5% false negative rate on long snippets of code, in order to minimize the risk of falsely accusing a human developer.
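This trade-off can be illustrated with a generic calibration rule: choose the score threshold from known human-written samples so that at most a target fraction of them would be flagged, then accept whatever miss rate that implies on AI samples. This is a sketch, not Pangram's actual calibration procedure; the scores, function names, and targets are all hypothetical.

```python
import math


def conservative_threshold(human_scores: list[float], max_fpr: float) -> float:
    """Threshold t such that at most max_fpr of human scores are strictly above t.

    A sample is flagged as AI only if its score is strictly greater than t.
    """
    if not human_scores or not 0.0 <= max_fpr < 1.0:
        raise ValueError("need human scores and 0 <= max_fpr < 1")
    ranked = sorted(human_scores, reverse=True)
    allowed = math.floor(max_fpr * len(ranked))  # human samples we tolerate flagging
    # Every score strictly above ranked[allowed] sits at an index below `allowed`,
    # so at most `allowed` human samples end up flagged.
    return ranked[allowed]


def miss_rate(ai_scores: list[float], threshold: float) -> float:
    """Fraction of AI samples not flagged (the false negative rate)."""
    return sum(s <= threshold for s in ai_scores) / len(ai_scores)


human = [0.05, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9]
ai = [0.7, 0.85, 0.95, 0.99]

for target in (0.1, 0.0):
    t = conservative_threshold(human, target)
    print(target, t, miss_rate(ai, t))
# 0.1 0.8 0.25
# 0.0 0.9 0.5
```

In this toy data, tightening the false positive target from 10% to 0% raises the threshold and doubles the miss rate, the same direction of trade-off described above.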

Engineering and recruiting teams can integrate Pangram into their enterprise workflows through Pangram's Python SDK for AI code detection or through Pangram's API. Both options allow for automated AI code checking within existing Git or ATS workflows.
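A pre-merge job along these lines could collect the source files a branch changed and pass each one to a detector. The sketch relies only on the standard `git diff --name-only` command. `detect` is a hypothetical placeholder for whichever Pangram SDK or API call you actually use (its name and signature are not assumed here), and the `origin/main` base branch, suffix list, and 40-line minimum are illustrative choices, the last echoing the long-snippet accuracy heading.

```python
import subprocess
from pathlib import Path
from typing import Callable

CODE_SUFFIXES = {".py", ".js", ".ts", ".go", ".java", ".c", ".cpp", ".rs"}
MIN_LINES = 40  # illustrative: very short files carry little signal


def parse_changed_files(diff_output: str) -> list[str]:
    """Keep source files from `git diff --name-only` output."""
    paths = (line.strip() for line in diff_output.splitlines())
    return [p for p in paths if p and Path(p).suffix in CODE_SUFFIXES]


def changed_files(base: str = "origin/main") -> list[str]:
    """Files added, copied, modified, or renamed on this branch relative to `base`."""
    out = subprocess.run(
        ["git", "diff", "--name-only", "--diff-filter=ACMR", f"{base}...HEAD"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_changed_files(out)


def scan(paths: list[str], detect: Callable[[str], float]) -> dict[str, float]:
    """Score each file long enough to judge; `detect` is a hypothetical stand-in."""
    scores = {}
    for path in paths:
        source = Path(path).read_text(encoding="utf-8")
        if len(source.splitlines()) >= MIN_LINES:
            scores[path] = detect(source)
    return scores


print(parse_changed_files("src/app.py\nREADME.md\nweb/index.ts\n"))
# ['src/app.py', 'web/index.ts']
```

In CI, `scan(changed_files(), detect)` would yield per-file scores that a review rule, such as the 100%-AI-generated check, can then act on.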

Verifying Code Integrity

AI coding assistants are powerful tools that accelerate all kinds of software development, but they cannot be blindly trusted to write secure, proprietary infrastructure.

By integrating an accurate AI code detector into their workflow, engineering and recruiting teams can:

- Secure their software supply chain.

- Protect their IP.

- Ensure they are hiring top-tier human talent.

Verify the provenance and originality of your codebase with the industry's most accurate AI detection platform.

Alex Roitman is Head of Growth at Pangram Labs, an AI content detection company. His work focuses on how AI-generated text is reshaping writing, education, and trust in the open web.
