
Attending law school means heavy reading and a great deal of writing. Generative AI tools like ChatGPT are very tempting, especially since they can draft personal statements, case briefs, and research memos.

This guide covers how law school policies are evolving and why you must be extremely cautious about how you integrate AI into your legal education.

Do Law Schools Use AI Detectors on Admissions Essays?

Yes. Many law school admissions offices, independent pre-law counselors, and law firms actively employ AI detection software to ensure that the personal statements and diversity essays in an application are the applicant’s own authentic work.

Law schools want to admit individuals with unique perspectives and authentic voices, as well as the intellectual fortitude to succeed in their programs. An essay generated by ChatGPT is inherently generic and ultimately defeats the purpose of a personal statement.

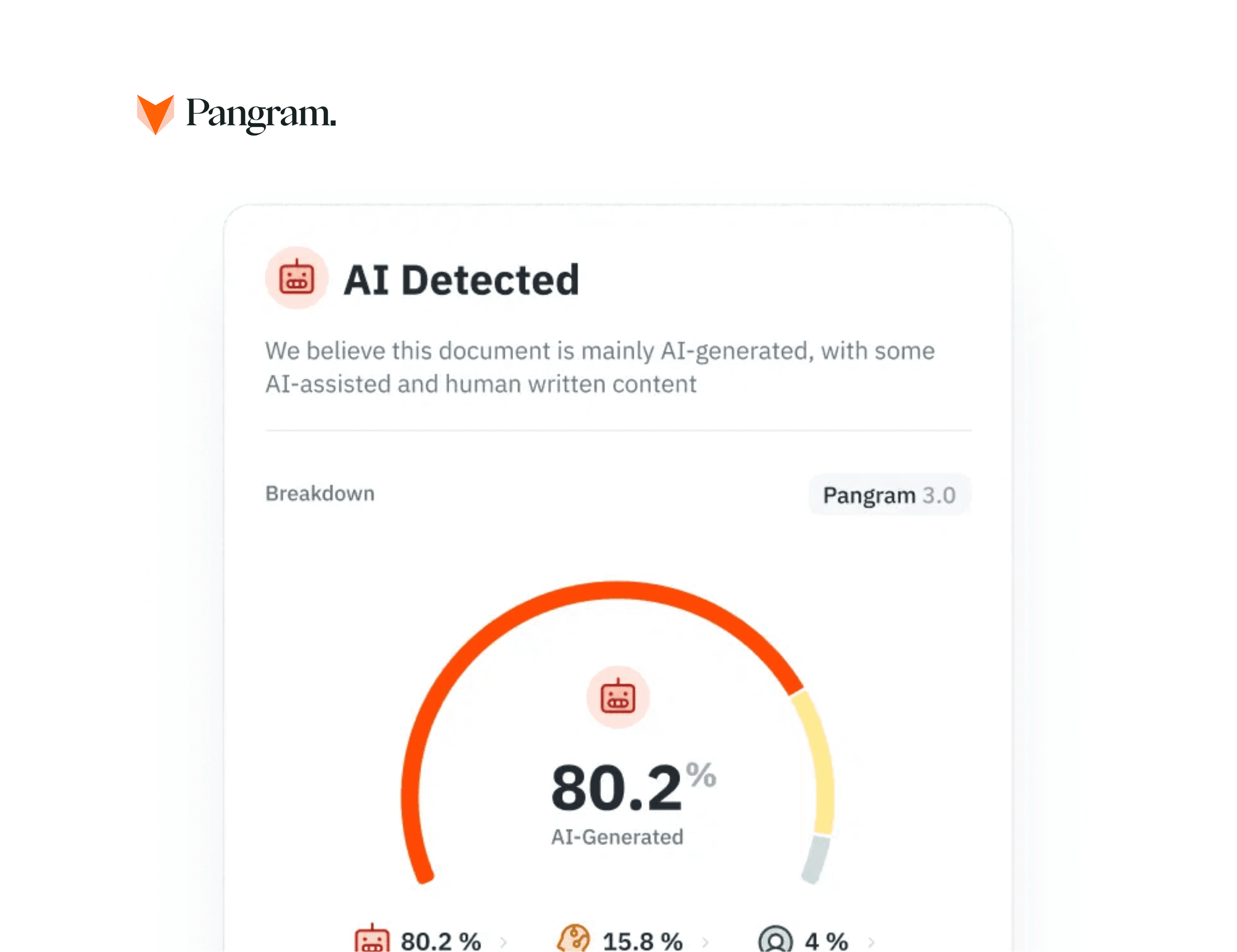

AI detection starts at the very beginning of the law school admissions process. Top-tier admissions consultants, such as Gradpilot, integrate tools like Pangram directly into their review process. By doing so, they can identify an applicant’s overreliance on AI before the essay is ever submitted to LSAC.

Are AI Detectors Used on Law School Assignments?

Yes. Academic integrity offices and law professors use enterprise-grade AI detectors integrated into their Learning Management Systems to check law school assignments of all kinds, including legal writing assignments, research memos, and take-home exams.

In any kind of legal writing, accuracy and authenticity are table stakes. Using LLMs like ChatGPT is problematic because they are prone to fabricating case law, citations, and legal “facts.” Because of this, relying on AI for legal assignments doesn’t just risk a plagiarism charge; it risks severe academic penalties for fabricating legal precedent.

Professors use AI scores as a diagnostic tool. They combine the detector’s result with their own knowledge of a student’s prior writing to investigate suspected academic dishonesty. This mosaic approach makes it easier to detect AI use than relying on a score alone.

The "Legalese" Problem: Will I Get a False Positive?

Standard, free AI detectors are known for falsely flagging highly formal, structured writing, such as legal memos, as AI-generated. This isn’t the case with the enterprise tools that universities use to check legal documents for AI; these tools are trained to avoid this flaw and deliver accurate AI assessments.

Standard, free AI detectors rely on perplexity, a measure of statistical surprise: how unexpected each word is to a language model. Predictable, highly structured text scores low on perplexity, and basic detectors treat low perplexity as a sign of AI use.

Documents like the “Declaration of Independence” are highly structured and predictable, which is why many basic AI detectors flag them as AI. The tools that universities use, however, are far more accurate in their assessments.
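To make the idea of perplexity concrete, here is a minimal sketch in Python. It assumes you already have a per-token probability for each word from some language model; the probability lists below are made-up illustrative values, not output from any real detector, and the function name is hypothetical.

```python
import math

def perplexity(token_probs):
    """Perplexity from per-token probabilities: exp of the average negative log-probability."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log_prob = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log_prob)

# Hypothetical probabilities a model might assign to each next word.
predictable_text = [0.9, 0.8, 0.95, 0.85]   # formulaic, expected wording
surprising_text = [0.2, 0.05, 0.1, 0.3]     # unusual, unexpected wording

# Predictable text yields the lower perplexity, which is what a
# perplexity-only detector would treat as "looks like AI".
print(perplexity(predictable_text) < perplexity(surprising_text))
```

A detector that relies on this number alone cannot tell formulaic human writing from machine output, which is why formal legal prose can trip it.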

If you write your own work and use AI only in the ways that are allowed, you have nothing to worry about. Premium university tools, such as Pangram, use hard negative mining to distinguish formal legal syntax from AI-generated text. This results in a near-zero false positive rate of 1 in 10,000.
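As a rough illustration of what a 1 in 10,000 false positive rate means in practice, the sketch below takes that figure at face value and assumes each human-written document is flagged independently, which is a simplification. The function names and the document counts are hypothetical.

```python
FALSE_POSITIVE_RATE = 1 / 10_000  # rate quoted above, taken at face value

def expected_false_flags(num_human_docs, rate=FALSE_POSITIVE_RATE):
    """Expected number of human-written documents wrongly flagged."""
    return num_human_docs * rate

def chance_of_any_false_flag(num_human_docs, rate=FALSE_POSITIVE_RATE):
    """Probability that at least one document is wrongly flagged,
    assuming flags are independent across documents."""
    return 1 - (1 - rate) ** num_human_docs

# Hypothetical scale: a school checking 50,000 submissions,
# and one student submitting 40 assignments over a degree.
print(expected_false_flags(50_000))
print(chance_of_any_false_flag(40))
```

Under these assumptions, the risk for an individual student stays well below one percent across dozens of assignments, while a large institution should still expect an occasional false flag and review scores alongside other evidence.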

Navigating AI Policies in Legal Education

Every law school has its own unique AI policies. Some professors are strict: AI is completely banned. Other professors are far more open to the technology and incorporate AI into their curricula to teach future lawyers to use legal tech responsibly.

Right now, the legal profession is adopting generative AI for routine tasks such as document review and contract analysis. For this reason, some professors permit AI for brainstorming or outlining, but in most cases only if you include an attribution statement describing how you used it.

If a law school’s policy or a professor’s syllabus does not explicitly allow the use of an LLM for an assignment, assume that it is strictly prohibited. If there is a law school ChatGPT policy or a set of guidelines on the use of LLMs, follow it.

Submitting any kind of AI-generated text without disclosure is widely considered academic fraud. If you are found guilty of academic fraud, you could face severe consequences, up to and including expulsion.

Best Practices to Protect Your Academic Record

Law students and applicants should employ these best practices to protect their academic record: always draft original work, track your version history, hold onto that version history after you submit an assignment, and, if you have used AI for light grammar editing, brainstorming, or anything else, pre-check your documents before you submit them.

In addition to employing those best practices, you should check your work for words that AI tools overuse. “Delve,” “tapestry,” and “pivotal” are some of the most common. Using these words too often could raise suspicion that you used AI.

If you use an AI tool for spellchecking, an advanced legal writing AI checker like Pangram can distinguish between “Lightly AI-Assisted” grammar checks and text that is “Fully AI-Generated.” That distinction can help show that you wrote the core arguments of a document yourself.
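A quick self-check for these words can be sketched in a few lines of Python. The word list contains only the three examples named above, the function name is hypothetical, and the match is on exact word forms, so variants like "delves" are not counted.

```python
import re
from collections import Counter

# Only the three example words from this article; extend as you see fit.
FLAGGED_WORDS = {"delve", "tapestry", "pivotal"}

def count_flagged_words(text, flagged=FLAGGED_WORDS):
    """Count exact, case-insensitive occurrences of each flagged word."""
    words = re.findall(r"[a-z']+", text.lower())
    return Counter(word for word in words if word in flagged)

draft = "This memo will delve into a pivotal ruling. The ruling was pivotal."
for word, count in count_flagged_words(draft).items():
    print(f"{word}: {count}")
```

A count like this only highlights word choices worth a second look. It says nothing on its own about whether a text was AI-generated.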

Law schools are actively monitoring AI use to protect the integrity of the legal profession and the people who serve in it.

While AI is a powerful tool for synthesis, it cannot replace the rigorous legal reasoning and critical thinking required to earn a JD.

Ensure your personal statement or legal memo is entirely your own. Verify your text’s authenticity before you submit.

Related reading

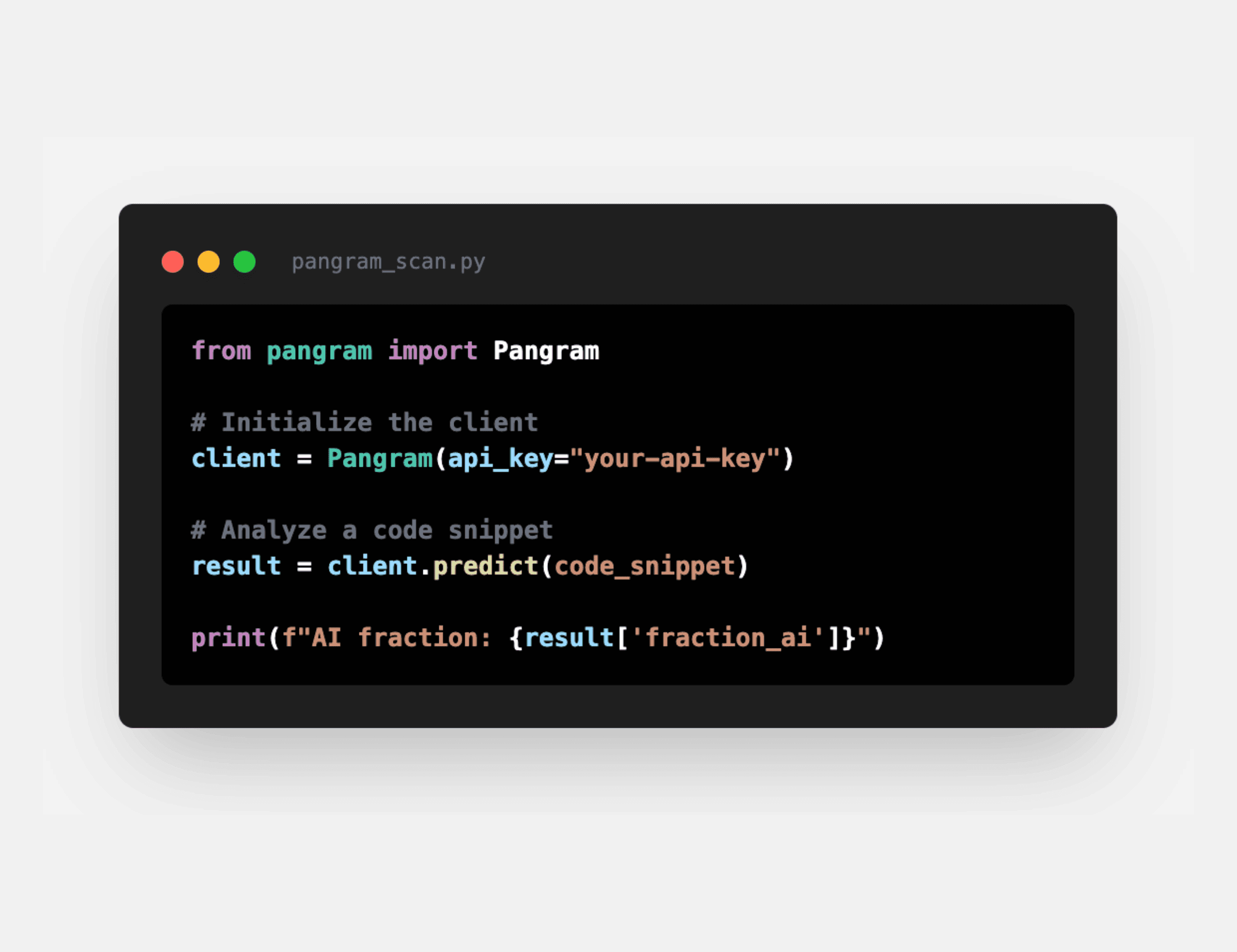

How well does Pangram work on AI code?

What does your AI detection score mean?

AI Code Detector: How to Check if Code Was Written by ChatGPT, Copilot, or Claude

Did AI Write This? 4 Ways to Check if Text Was Generated

A Mosaic Approach to Academic Integrity in the AI Era (With Chris Ostro)

Introducing Pangram’s Plagiarism Detection

to our updates