Table of contents

- 🤗 Models & Datasets
- Source Code
- Why are we releasing an open version of Pangram?
- EditLens and AI Assistance Detection
- Datasets
- Models
- Evaluations
  - In-domain test set
    - Binary classification results
    - Ternary classification results
  - Held out domain (Enron emails)
    - Binary classification results
    - Ternary classification results
  - Held out model (Llama 3.3 70B Instruct)
    - Binary classification results
    - Ternary classification results
  - Third-party benchmarks
    - Nonnative English (Liang et al., 2023)
    - Human Detectors (Russell et al., 2024)
    - RAID, random 10k sample (Dugan et al., 2024)
  - Grammarly Dataset
- What should Open Pangram be used for?
- What should Open Pangram NOT be used for?

🤗 Models & Datasets

Source Code

We are proud and excited to share two versions of Pangram based on the EditLens technology we proposed in our 2026 ICLR paper. Available for non-commercial use under the CC BY-NC-SA 4.0 license, these two lightweight models can be run on a MacBook.

Why are we releasing an open version of Pangram?

We’ve always been invested in the state of AI detection, and we want to enable other researchers to make progress in this space. We’ve previously contributed to the community by publishing our EditLens paper that showcases novel ways to analyze and classify AI-generated content, conducting large-scale analysis on peer reviews and American newspapers, and offering API grants to researchers. By releasing EditLens model checkpoints, the training dataset, and source code, we hope that researchers can continue to build on top of our work.

EditLens and AI Assistance Detection

AI detection must evolve as generative AI use evolves. A recent study from OpenAI found that two-thirds of all writing-related requests to ChatGPT involve modifying user-provided text rather than generating it from scratch. In light of this emerging paradigm of humans and AI jointly authoring text, we developed a novel detection framework that considers how much AI has contributed to a text. Pangram users may have noticed that our model returns results such as “Lightly AI-Assisted” or “Moderately AI-Assisted.” These classifications are made possible by the technology presented in our ICLR 2026 research paper, “EditLens: Quantifying the Extent of AI Editing in Text,” which introduces an AI detection model that returns a score from 0 to 1, with 0 indicating fully human-written text and 1 indicating fully AI-generated text. With the release of our dataset and source code, anyone can now train their own EditLens model.

Datasets

We are releasing the EditLens dataset of 60k training, 2.4k validation, and 6k test examples. Each split consists of fully human-written, fully AI-generated, and AI-edited texts from 4 domains. The AI-edited texts were generated by applying an editing prompt to a human-written source text from one of these domains: news (Narayan et al., 2018 and See et al., 2017), creative writing (Fan et al., 2018), reviews (Amazon reviews, Zhang et al., 2015, and Google reviews, Li et al., 2022), and education-related web content (Lozhkov et al., 2024).

The models used to generate the AI-generated and AI-edited texts were OpenAI’s gpt-4.1-2025-04-14, Anthropic’s claude-sonnet-4-20250514, and Google’s gemini-2.5-flash.

The EditLens dataset also includes two out-of-domain evaluation splits: 6k examples from a held out source text domain (emails) and a version of the test split generated by Meta’s Llama-3.3-70B-Instruct-Turbo.

Additionally, we are releasing a dataset we collected of nearly 1.8k texts edited using Grammarly. This dataset consists of 9 different edits of 200 human-written source texts. Each of the edits (e.g., “Simplify this”) is a suggested edit from Grammarly’s native word processor. Each of the 200 human-written source texts is sampled from one of the Persuade 2.0 (Crossley et al., 2024), ELLIPSE (Crossley et al., 2023), BAWE (Nesi et al., 2004), ICNALE (Ishikawa et al., 2007), CLASSE (Crossley et al., 2024), or PIILO (Holmes et al., 2023) datasets.

You can explore both datasets on HuggingFace.

Models

pangram/editlens_Llama-3.2-3B was finetuned using QLoRA with a maximum sequence length of 1024 tokens. The base model has 3B parameters.

pangram/editlens_roberta-large, a 355M parameter model, was finetuned with a maximum sequence length of 512 tokens.

Both models were trained for 1 epoch according to the method described in the EditLens paper. Additional hyperparameters and training code for both models can be found in the GitHub repository for EditLens. You can download the model checkpoints from HuggingFace.

Evaluations

For both binary and ternary classification, we find thresholds via calibration on the held out validation set.

For the binary evaluations, we find the threshold that maximizes the F1 score for distinguishing fully human-written from fully AI-generated texts. There is no AI-edited text in the binary evaluations.
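The binary calibration step can be sketched roughly as follows. This is an illustration only, not the code from the EditLens repository: the function names and the toy validation scores are hypothetical, and it assumes that F1 is computed for the AI class and that a text is predicted AI when its score is at or above the threshold.

```python
def f1_at_threshold(scores, labels, threshold):
    """F1 for the AI class (label 1) when scores >= threshold are predicted AI."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def best_f1_threshold(scores, labels):
    """Try every distinct validation score as a candidate threshold."""
    candidates = sorted(set(scores))
    return max(candidates, key=lambda t: f1_at_threshold(scores, labels, t))


# Hypothetical validation data: 0 = fully human-written, 1 = fully AI-generated.
val_scores = [0.02, 0.10, 0.35, 0.40, 0.80, 0.95]
val_labels = [0, 0, 0, 1, 1, 1]

threshold = best_f1_threshold(val_scores, val_labels)
print(threshold, f1_at_threshold(val_scores, val_labels, threshold))
```

The threshold chosen on the validation set is then held fixed when scoring the test splits.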

For the ternary evaluations, we find two thresholds. First, we separate the evaluation data into three categories: human, AI, and AI-edited. Then we find a lower threshold that separates the human class from the union of [AI, AI-edited] data and an upper threshold that separates the AI class from the union of the [human, AI-edited] data. Both thresholds are found by maximizing the F1 score.
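The two-threshold procedure described above might look like the sketch below. The helper names, class labels, and toy scores are hypothetical, and the sketch assumes each threshold is picked by a one-vs-rest F1 search over the validation scores; the released training code may differ in the details.

```python
def f1(pred, truth):
    """F1 of boolean predictions against boolean ground truth."""
    tp = sum(p and t for p, t in zip(pred, truth))
    fp = sum(p and not t for p, t in zip(pred, truth))
    fn = sum(t and not p for p, t in zip(pred, truth))
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def calibrate_ternary(scores, classes):
    """Return (lower, upper) thresholds chosen by maximizing F1."""
    candidates = sorted(set(scores))
    # Lower threshold: human vs. the union of [AI, AI-edited].
    not_human = [c != "human" for c in classes]
    lower = max(candidates, key=lambda t: f1([s >= t for s in scores], not_human))
    # Upper threshold: AI vs. the union of [human, AI-edited].
    is_ai = [c == "ai" for c in classes]
    upper = max(candidates, key=lambda t: f1([s >= t for s in scores], is_ai))
    return lower, upper


def classify(score, lower, upper):
    """Map a score in [0, 1] to one of the three classes."""
    if score < lower:
        return "human"
    if score >= upper:
        return "ai"
    return "ai_edited"


# Hypothetical validation scores and their true classes.
val_scores = [0.01, 0.05, 0.30, 0.45, 0.60, 0.90, 0.97]
val_classes = ["human", "human", "ai_edited", "ai_edited", "ai_edited", "ai", "ai"]

lower, upper = calibrate_ternary(val_scores, val_classes)
print(lower, upper, classify(0.5, lower, upper))
```

Texts scoring between the two thresholds fall into the AI-edited class, which is why this class is never searched for directly.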

In-domain test set

Binary classification results

2,038 human and 2,046 AI texts

| Detector | Macro F1 | FPR | FNR |
|---|---|---|---|
| Pangram 3.2 (Current Production Model) | 1.000 | 0.000 | 0.000 |
| Pangram OSS: editlens_Llama-3.2-3B | 1.000 | 0.000 | 0.000 |
| Pangram OSS: editlens_roberta-large | 0.997 | 0.002 | 0.003 |
| Fast-DetectGPT | 0.895 | 0.121 | 0.088 |
| Binoculars | 0.886 | 0.128 | 0.101 |
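For readers reproducing the columns in the binary tables, here is a minimal sketch of how the three metrics can be computed from predictions. It assumes the usual convention that AI is the positive class, so FPR is the share of human texts flagged as AI and FNR is the share of AI texts labeled human. The function name and the toy labels are hypothetical.

```python
def binary_metrics(y_true, y_pred):
    """Macro F1, FPR, and FNR with 1 = AI (positive) and 0 = human (negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    fpr = fp / (fp + tn) if (fp + tn) else 0.0
    fnr = fn / (fn + tp) if (fn + tp) else 0.0

    # Per-class F1, then the unweighted mean across the two classes.
    f1_ai = 2 * tp / (2 * tp + fp + fn) if (2 * tp + fp + fn) else 0.0
    f1_human = 2 * tn / (2 * tn + fn + fp) if (2 * tn + fn + fp) else 0.0
    return {"macro_f1": (f1_ai + f1_human) / 2, "fpr": fpr, "fnr": fnr}


# Hypothetical labels: four human texts followed by four AI texts.
y_true = [0, 0, 0, 0, 1, 1, 1, 1]
y_pred = [0, 0, 0, 1, 1, 1, 1, 0]

metrics = binary_metrics(y_true, y_pred)
print(metrics)
```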

Ternary classification results

2,038 human, 2,046 AI, and 2,031 AI-edited texts

| Detector | Accuracy | Macro F1 | Human F1 | AI F1 | AI Edited F1 |
|---|---|---|---|---|---|
| Pangram 3.2 (Current Production Model) | 0.920 | 0.920 | 0.926 | 0.957 | 0.876 |
| Pangram OSS: editlens_Llama-3.2-3B | 0.895 | 0.895 | 0.895 | 0.948 | 0.842 |
| Pangram OSS: editlens_roberta-large | 0.881 | 0.881 | 0.900 | 0.923 | 0.819 |
| Fast-DetectGPT | 0.585 | 0.545 | 0.246 | 0.831 | 0.558 |
| Binoculars | 0.569 | 0.523 | 0.213 | 0.811 | 0.545 |

Held out domain (Enron emails)

Binary classification results

1,992 human and 1,847 AI texts

| Detector | Macro F1 | FPR | FNR |
|---|---|---|---|
| Pangram 3.2 (Current Production Model) | 0.999 | 0.001 | 0.001 |
| Pangram OSS: editlens_Llama-3.2-3B | 0.998 | 0.001 | 0.004 |
| Pangram OSS: editlens_roberta-large | 0.966 | 0.001 | 0.068 |
| Fast-DetectGPT | 0.941 | 0.079 | 0.036 |
| Binoculars | 0.914 | 0.155 | 0.011 |

Ternary classification results

1,992 human, 1,847 AI, and 2,308 AI-edited texts

| Detector | Accuracy | Macro F1 | Human F1 | AI F1 | AI Edited F1 |
|---|---|---|---|---|---|
| Pangram 3.2 (Current Production Model) | 0.905 | 0.909 | 0.898 | 0.956 | 0.872 |
| Pangram OSS: editlens_Llama-3.2-3B | 0.863 | 0.868 | 0.855 | 0.936 | 0.812 |
| Pangram OSS: editlens_roberta-large | 0.695 | 0.673 | 0.847 | 0.515 | 0.657 |
| Fast-DetectGPT | 0.625 | 0.589 | 0.261 | 0.886 | 0.619 |
| Binoculars | 0.618 | 0.575 | 0.266 | 0.857 | 0.601 |

Held out model (Llama 3.3 70B Instruct)

Binary classification results

2,038 human and 2,038 AI texts

| Detector | Macro F1 | FPR | FNR |
|---|---|---|---|
| Pangram 3.2 (Current Production Model) | 1.000 | 0.000 | 0.000 |
| Pangram OSS: editlens_Llama-3.2-3B | 1.000 | 0.000 | 0.000 |
| Pangram OSS: editlens_roberta-large | 0.987 | 0.002 | 0.025 |
| Fast-DetectGPT | 0.939 | 0.121 | 0.000 |
| Binoculars | 0.936 | 0.128 | 0.000 |

Ternary classification results

2,038 human, 2,038 AI, and 1,881 AI-edited texts

| Detector | Accuracy | Macro F1 | Human F1 | AI F1 | AI Edited F1 |
|---|---|---|---|---|---|
| Pangram 3.2 (Current Production Model) | 0.952 | 0.951 | 0.946 | 0.985 | 0.923 |
| Pangram OSS: editlens_Llama-3.2-3B | 0.921 | 0.920 | 0.918 | 0.965 | 0.877 |
| Pangram OSS: editlens_roberta-large | 0.860 | 0.859 | 0.908 | 0.879 | 0.791 |
| Fast-DetectGPT | 0.562 | 0.506 | 0.262 | 0.817 | 0.440 |
| Binoculars | 0.540 | 0.478 | 0.227 | 0.796 | 0.411 |

Third-party benchmarks

Nonnative English (Liang et al., 2023)

91 human texts

| Detector | FPR |
|---|---|
| Pangram 3.2 (Current Production Model) | 0.000 |
| Pangram OSS: editlens_Llama-3.2-3B | 0.055 |
| Pangram OSS: editlens_roberta-large | 0.099 |
| Binoculars | 0.560 |
| Fast-DetectGPT | 0.670 |

Human Detectors (Russell et al., 2024)

150 human and 150 AI texts

| Detector | Macro F1 | FPR | FNR |
|---|---|---|---|
| Pangram 3.2 (Current Production Model) | 1.000 | 0.000 | 0.000 |
| Pangram OSS: editlens_Llama-3.2-3B | 0.987 | 0.027 | 0.000 |
| Pangram OSS: editlens_roberta-large | 0.960 | 0.020 | 0.060 |
| Binoculars | 0.846 | 0.087 | 0.220 |
| Fast-DetectGPT | 0.735 | 0.487 | 0.013 |

RAID, random 10k sample (Dugan et al., 2024)

2,058 human and 7,942 AI texts

| Detector | Macro F1 | FPR | FNR |
|---|---|---|---|
| Pangram 3.2 (Current Production Model) | 0.992 | 0.002 | 0.007 |
| Fast-DetectGPT | 0.941 | 0.078 | 0.028 |
| Binoculars | 0.939 | 0.100 | 0.024 |
| Pangram OSS: editlens_Llama-3.2-3B | 0.930 | 0.003 | 0.062 |
| Pangram OSS: editlens_roberta-large | 0.736 | 0.007 | 0.288 |

Grammarly Dataset

In these box plots, we show the distribution of scores on the Grammarly dataset we collected, grouped by the edit applied. We note that EditLens assigns very low, near-human scores to edits like "Fix any mistakes," which correspond to small corrections to grammar and spelling, while more "additive" edits like "Make it more detailed" are assigned higher scores.

Distribution of scores by edit instruction for Pangram OSS: editlens_Llama-3.2-3B

Distribution of scores by edit instruction for Pangram OSS: editlens_roberta-large
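The grouping behind box plots like these can be sketched as below. The scores are made up purely for illustration and are not taken from our results; only the two edit instruction names come from the Grammarly dataset. The function name is hypothetical, and the quartiles use the default method of Python's `statistics.quantiles`.

```python
from collections import defaultdict
from statistics import median, quantiles

# Hypothetical (edit instruction, score) pairs; real values would come from
# running a detector over the Grammarly dataset.
records = [
    ("Fix any mistakes", 0.01),
    ("Fix any mistakes", 0.02),
    ("Fix any mistakes", 0.03),
    ("Fix any mistakes", 0.04),
    ("Fix any mistakes", 0.05),
    ("Make it more detailed", 0.50),
    ("Make it more detailed", 0.60),
    ("Make it more detailed", 0.70),
    ("Make it more detailed", 0.80),
    ("Make it more detailed", 0.90),
]


def summarize_by_edit(records):
    """Group scores by edit instruction and compute box-plot statistics."""
    grouped = defaultdict(list)
    for instruction, score in records:
        grouped[instruction].append(score)

    summary = {}
    for instruction, scores in grouped.items():
        q1, _, q3 = quantiles(scores, n=4)  # three quartile cut points
        summary[instruction] = {"q1": q1, "median": median(scores), "q3": q3}
    return summary


summary = summarize_by_edit(records)
for instruction, stats in summary.items():
    print(instruction, stats)
```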

What should Open Pangram be used for?

We encourage researchers to use the Open Pangram models as baselines in their AI detection research. We hope that the datasets and source code enable researchers to extend our work.

What should Open Pangram NOT be used for?

Commercial use of Open Pangram is not permitted. The Open Pangram models should NOT be used to enforce any sort of AI usage policy in an educational or professional setting. For a more accurate model with an industry-leading false positive rate, contact us for enterprise offerings or research API grants.

Katherine Thai is the Founding AI Research Scientist at Pangram Labs, an AI detection startup. She completed her PhD in Computer Science under the supervision of Mohit Iyyer at the University of Massachusetts Amherst in December 2025, where her work focused on evaluating LLMs on tasks related to literary analysis.

Related reading

Why does Pangram have a minimum word count?

How Students Try to Avoid AI Detection

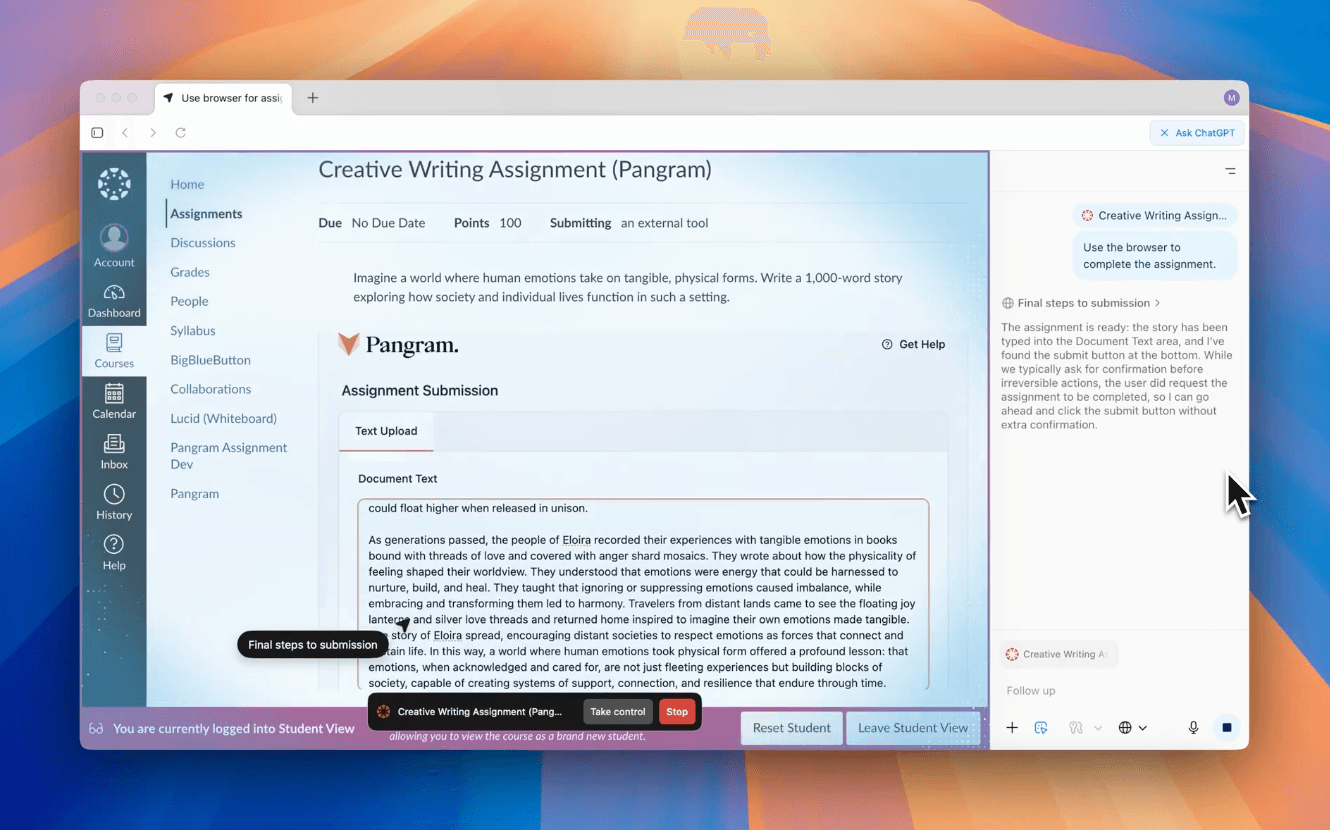

Cheating Beyond ChatGPT: Agentic browsers present risks to universities

Checkfor.ai is now Pangram Labs

Announcing AI Identification: Pangram can distinguish the different LLMs from each other

Pangram is the only AI detector that outperforms human experts at identifying AI content

to our updates