AI Watermarking: Why Big Tech is Betting on AI Provenance, and Losing

Wherever internet users turn, they are accosted by AI-generated text, videos, and images, many of which are hard to tell apart from human work. Trust in online spaces is crumbling under the weight of AI content. Biden’s AI safety executive order and the European Union’s AI Act both called for labeling AI output, commonly through “watermarking,” but the executive order was rescinded in 2025. Even without a coordinated national or international strategy, many AI companies are still developing and deploying watermarking signals in their content. While this is a step in the right direction for regaining public trust and protecting consumers, watermarking is a flawed method that is easily bypassed.

Below, we will explore how watermarks work, why they fail and why good AI detection methods must rely on a specific type of pattern recognition.

AI watermarking is detected, but not seen

To humans, AI watermarking is invisible. Instead of a traditional watermark displaying a company’s name or logo, AI watermarks are embedded into an AI model's text, image, and video output in an imperceptible way.

Google uses SynthID, which embeds subtle variations in Gemini's output that Google's own technology can detect. For text, SynthID adjusts the probability scores of candidate words according to a pseudorandom function, giving Gemini a slight preference for certain words. A detector that knows the same function can then check whether a piece of text favors those words more often than chance would allow, and so recognize Gemini's handiwork.
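To make the idea concrete, here is a minimal Python sketch of a keyed word-preference watermark. It is a simplified illustration and not SynthID's actual algorithm: the toy vocabulary, the key, and the helper names `is_green`, `generate`, and `z_score` are all hypothetical. A keyed hash marks roughly half of all words as "preferred" in any given context, the generator leans toward preferred words, and the detector counts how often they show up.

```python
import hashlib
import math
import random

VOCAB = [f"w{i}" for i in range(1000)]  # hypothetical toy vocabulary
KEY = "secret-key"                      # hypothetical watermark key


def is_green(prev_word: str, word: str, key: str = KEY) -> bool:
    """Keyed pseudorandom function: about half of all words are 'preferred'."""
    digest = hashlib.sha256(f"{key}|{prev_word}|{word}".encode()).digest()
    return digest[0] % 2 == 0


def generate(n_words: int, watermark: bool, seed: int = 0) -> list[str]:
    """Stand-in for a language model: pick each word from 8 random candidates."""
    rng = random.Random(seed)
    words = ["<s>"]
    for _ in range(n_words):
        candidates = rng.sample(VOCAB, 8)
        if watermark:
            # Prefer 'green' candidates; fall back to all if none are green.
            green = [w for w in candidates if is_green(words[-1], w)]
            candidates = green or candidates
        words.append(rng.choice(candidates))
    return words[1:]


def z_score(words: list[str], key: str = KEY) -> float:
    """How far the count of preferred words sits above the 50% chance level."""
    prev, hits = "<s>", 0
    for w in words:
        hits += is_green(prev, w, key)
        prev = w
    n = len(words)
    return (hits - 0.5 * n) / math.sqrt(0.25 * n)


marked = generate(200, watermark=True)
plain = generate(200, watermark=False)
print(f"watermarked z = {z_score(marked):.1f}")
print(f"unmarked    z = {z_score(plain):.1f}")
```

In this toy setup, watermarked text scores far above zero while unmarked text hovers near zero. Without the key, the preferred words look like any others, which is why the signal is invisible to readers.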

Fully AI-generated content cannot be copyrighted under U.S. law, so it's in AI companies' interest to develop a method for determining the provenance of their models' content. That way, once an AI-generated text, image or video circulates around the web, there is a signature that identifies where it came from.

Staking a claim to their models’ creations isn’t the only reason Big Tech wants provenance. As internet users gawk at the never-ending pile of AI slop delivered to their doorsteps daily, major AI developers fear the legal, reputational and security risks that could come their way. Bad actors use AI tools to create deepfakes and spread misinformation, threatening global security. To avoid a potential crackdown in the shape of strict government mandates, Big Tech aims to get ahead of the game by building tools to self-regulate. If their models can decode content just as easily as they can generate it, why would any government entity need to monitor their development?

Bad news: watermarking is not foolproof

If everyone in the world promised to never alter AI content, maybe watermarking would work. But in this world, bad actors can easily alter AI-generated content in a way that obscures its origins. Secondary paraphrasing tools, like humanizers, can easily change words or sentence structure, inserting flaws or gibberish in an attempt to disguise AI-generated content. Unfortunately, sometimes this flimsy disguise works. Pangram Labs tested 19 different AI humanizers and found that many successfully removed watermarking from text.

Bad actors can also launder text by translating it through several languages and then back into English, which strips the watermark and produces a clean piece of writing that the AI companies’ decoding systems will not flag.
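The fragility described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not a test of any real watermark or humanizer: the keyed word-preference scheme, the vocabulary, and the `paraphrase` helper (which simply swaps a fraction of words at random) are all hypothetical stand-ins.

```python
import hashlib
import math
import random

VOCAB = [f"w{i}" for i in range(1000)]  # hypothetical toy vocabulary
KEY = "secret-key"                      # hypothetical watermark key


def is_green(prev, word):
    """Keyed hash marks about half of all words as 'preferred' in a context."""
    digest = hashlib.sha256(f"{KEY}|{prev}|{word}".encode()).digest()
    return digest[0] % 2 == 0


def watermarked_text(n, seed=0):
    """Toy generator that favors preferred words whenever one is available."""
    rng = random.Random(seed)
    words, prev = [], "<s>"
    for _ in range(n):
        candidates = rng.sample(VOCAB, 8)
        green = [w for w in candidates if is_green(prev, w)]
        prev = rng.choice(green or candidates)
        words.append(prev)
    return words


def z_score(words):
    """Detector: how far the preferred-word count exceeds the chance level."""
    contexts = ["<s>"] + words[:-1]
    hits = sum(is_green(p, w) for p, w in zip(contexts, words))
    n = len(words)
    return (hits - 0.5 * n) / math.sqrt(0.25 * n)


def paraphrase(words, rate, seed=1):
    """Crude stand-in for a humanizer: swap a fraction of words at random."""
    rng = random.Random(seed)
    return [rng.choice(VOCAB) if rng.random() < rate else w for w in words]


original = watermarked_text(200)
for rate in (0.0, 0.25, 0.5, 1.0):
    rewritten = paraphrase(original, rate)
    print(f"rewrite rate {rate:.2f}: z = {z_score(rewritten):.1f}")
```

Each swapped word breaks the signal twice, once for the word itself and once for the word that follows it, so the detection score in this sketch falls off quickly as the rewrite rate grows.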

Watermarks are delicate and easily tampered with, making them useless. Detection requires other tools.

Statistical pattern recognition

The companies who are responsible for AI-generated slop cannot be solely responsible for detecting when a piece of content is theirs. Watermarking requires the cooperation of AI developers and can be removed in the blink of an eye.

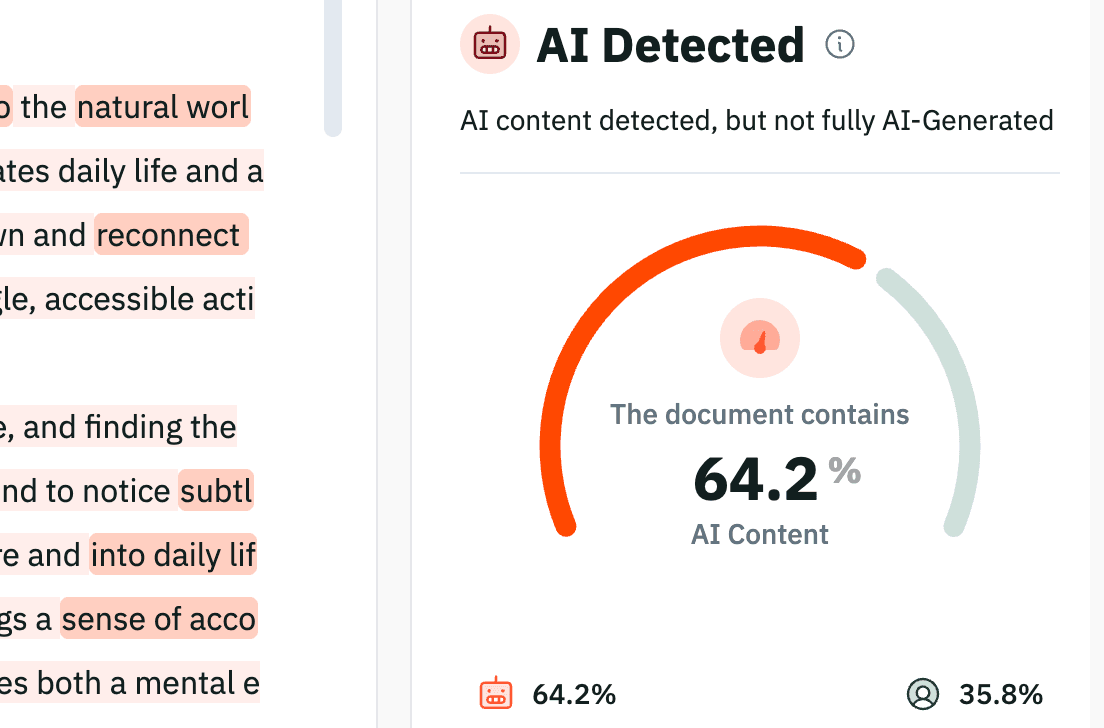

But where one method fails, another prevails. A method called independent statistical pattern recognition is far more robust and reliable for identifying AI-generated content, which is why we use it here at Pangram. We don't look for a hidden code embedded in AI-generated text. Instead, Pangram models analyze the fundamental linguistic DNA, structural predictability and syntax patterns of any given text for 99.98% accurate AI detection. Advanced detectors like Pangram don’t need watermarks to function. They are trained on diverse datasets through a method called hard negative mining, in which the detector is retrained on the examples it finds hardest to classify. Pangram can flag AI-generated content from open-source or unreleased models, identify maliciously modified text, and offer line-by-line analysis of pieces that mix AI-generated and human-written text.
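For readers curious about hard negative mining, here is a minimal Python sketch of the general technique. It is not Pangram's training pipeline: the two numeric features, the tiny logistic regression, and the names `sample`, `train`, `score`, and `mine_hard_negatives` are all assumptions made for illustration. The loop trains a detector, finds human examples it wrongly flags as AI, and retrains with those examples added.

```python
import math
import random

rng = random.Random(0)


def sample(label, n):
    """Hypothetical 2-feature examples: label 1 = AI, label 0 = human."""
    center = 1.0 if label else -1.0
    return [([rng.gauss(center, 1.0), rng.gauss(center, 1.0)], label)
            for _ in range(n)]


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train(examples, epochs=50, lr=0.05):
    """Fit a tiny logistic regression with stochastic gradient descent."""
    w0, w1, b = 0.0, 0.0, 0.0
    for _ in range(epochs):
        for (x0, x1), label in examples:
            error = sigmoid(w0 * x0 + w1 * x1 + b) - label
            w0 -= lr * error * x0
            w1 -= lr * error * x1
            b -= lr * error
    return w0, w1, b


def score(model, x):
    """Estimated probability that example x is AI-generated."""
    w0, w1, b = model
    return sigmoid(w0 * x[0] + w1 * x[1] + b)


def mine_hard_negatives(model, human_pool, threshold=0.5):
    """Return human examples the current model wrongly scores as AI."""
    return [(x, y) for x, y in human_pool if score(model, x) >= threshold]


train_set = sample(1, 50) + sample(0, 50)
human_pool = sample(0, 2000)  # large pool of known-human examples

model = train(train_set)
hard = mine_hard_negatives(model, human_pool)
retrained = train(train_set + hard)

print("false positives before:", len(hard))
print("false positives after: ", len(mine_hard_negatives(retrained, human_pool)))
```

Adding only human examples pushes the decision boundary away from human text, which can come at the cost of missing some AI text, so a real pipeline would presumably balance the mined examples with hard AI examples as well.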

From self-policing to independent detection

AI companies will not become the champions of internet authenticity. Watermarking is a strategy that does not stand up to bad actors, and independent detection is the only real solution.

Our models are already being used in practice. Platforms that rely on user trust, like Quora, have integrated API-level detection into their services, using Pangram to moderate millions of posts and flag AI-generated content.

True authenticity and transparency on the internet can only occur with a detection-based framework. Reliable, independent, state-of-the-art detectors like Pangram are helping to create checks and balances for the entire internet.

Whether you are an academic, a professional, or an IT developer, watermarking is not your silver bullet. Protecting against academic dishonesty or AI spam content requires detection. To accurately verify the origins of essays, emails, and social media posts, organizations must use detection tools that analyze the text itself rather than rely on watermarks.

Don't rely on watermarks to protect your platform. Identify altered AI-generated content instantly with Pangram.
