

Announcing Pangram 3.2

Update: Pangram 3.3 is now the latest version. See what's new in Pangram 3.3.

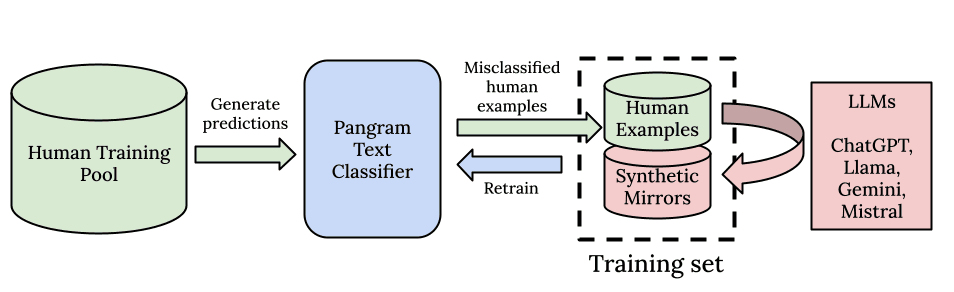

Pangram is excited to release a new AI detection model, Pangram 3.2. Like its predecessors, Pangram 3.1 and Pangram 3.0, this model is based on the EditLens architecture described in our ICLR 2026 paper. Users can expect an incremental but noticeable improvement in the number of true positives the detector catches (its recall). The false positive rate stays the same, among the lowest in the industry, so false accusations of AI use remain extremely rare.
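
As a rough illustration of the two metrics named above, the sketch below computes recall and false positive rate from confusion counts. The class name, function names, and all counts are made up for illustration; they are not Pangram's evaluation code or results.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    true_positives: int   # AI-generated texts flagged as AI
    false_negatives: int  # AI-generated texts that were missed
    false_positives: int  # human-written texts wrongly flagged as AI
    true_negatives: int   # human-written texts correctly passed


def recall(c: ConfusionCounts) -> float:
    """Share of AI-generated texts that the detector catches."""
    return c.true_positives / (c.true_positives + c.false_negatives)


def false_positive_rate(c: ConfusionCounts) -> float:
    """Share of human-written texts that are wrongly flagged."""
    return c.false_positives / (c.false_positives + c.true_negatives)


# Hypothetical counts, chosen only to show the shape of the claim:
# recall goes up while the false positive rate does not move.
older_model = ConfusionCounts(900, 100, 1, 9999)
newer_model = ConfusionCounts(950, 50, 1, 9999)

print(recall(older_model), recall(newer_model))
print(false_positive_rate(older_model), false_positive_rate(newer_model))
```

In this toy example the newer model catches more of the AI-generated texts, while the number of human-written texts flagged by mistake is unchanged.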

Model card

Following best practices for releasing large language models (LLMs), we have decided to start publishing model cards alongside our detector updates. These are essentially "nutrition labels" for AI models. Our model cards describe the architecture and training setup, details about the training dataset, relevant evaluation results, and any changes that may affect the detector's behavior. We also describe the exact specifications of the model's inputs and outputs, the supported languages, the conditions under which we expect Pangram to perform well, and the situations in which it is more limited.

What to expect

You will probably notice that Pangram 3.2 is more sensitive than Pangram 3.1. In other words, more AI-generated text will be detected. This is due to improved humanizer detection, improved detection of Claude 4.6, higher sensitivity on shorter AI-generated texts, more data in the training set, and better-tuned hyperparameters in the EditLens architecture.

What improved

Humanizer and adversarial prompting detection

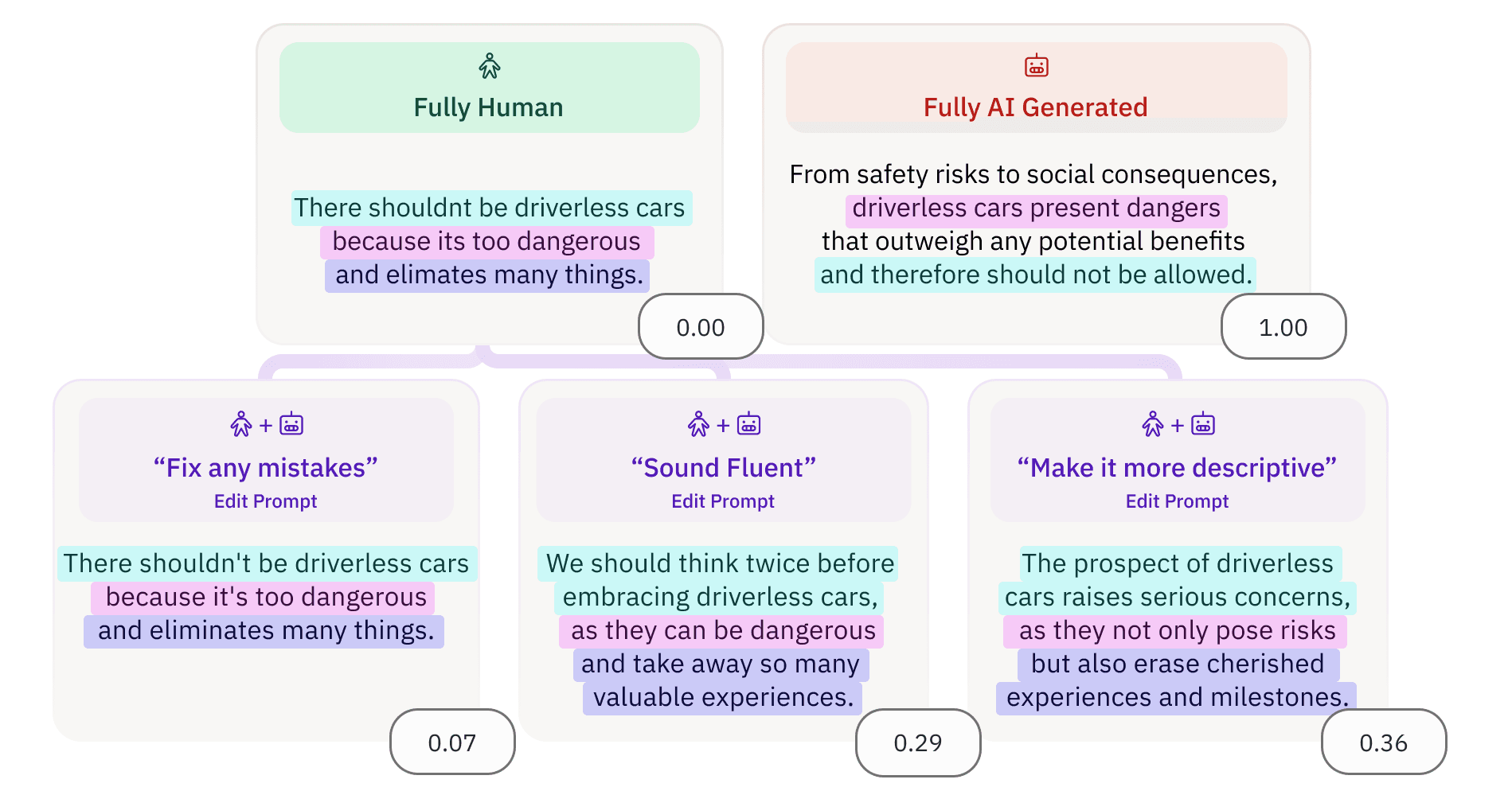

The biggest improvement in Pangram 3.2 is its ability to detect humanized AI-generated text. On our internal humanizer evaluation set, we improved the humanizer detection rate by 4x compared with Pangram 3.1. We also see an improvement of roughly 3x on our internal "adversarial prompting" evaluation, which consists of text generated by a language model that was instructed to add errors intentionally and to write in a style that evades AI detection.

This matters especially in education, where students increasingly use humanizer tools or try to prompt language models so that the resulting text does not look "too AI-generated".

Detection of short AI-generated social media posts

Given the virality of our X bot, which people have been using to check whether tweets contain AI-generated text, we have recently focused heavily on improving detection of short, tweet-length online content. We have also lowered the minimum word count from 75 to 50, since we are now more confident in our ability to identify AI-generated posts between 50 and 75 words long.
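
A minimal sketch of what a minimum-length gate looks like before a text is sent to a detector. The function names are hypothetical, and counting words by splitting on whitespace is an assumption; the post does not say how Pangram counts words.

```python
MIN_WORDS = 50  # minimum stated in the post for Pangram 3.2 (previously 75)


def word_count(text: str) -> int:
    # Assumption: a "word" is any whitespace-separated token.
    return len(text.split())


def long_enough(text: str, min_words: int = MIN_WORDS) -> bool:
    """Return True when the text meets the minimum word count."""
    return word_count(text) >= min_words


# A 60-word post clears the new 50-word minimum but not the old 75-word one.
post = " ".join(["word"] * 60)
print(long_enough(post))                # True
print(long_enough(post, min_words=75))  # False
```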

At a false positive rate equivalent to that of Pangram 3.1, Pangram 3.2 improves the false negative rate on short social media posts by 17%.
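
The false negative rate is the complement of recall: the share of AI-generated texts that slip through. The post does not say whether the 17% figure is a relative or an absolute change, so the sketch below shows both readings against a made-up baseline. The baseline value and function names are assumptions for illustration only.

```python
def false_negative_rate(recall: float) -> float:
    """Share of AI-generated texts that the detector misses."""
    return 1.0 - recall


def relative_reduction(old_rate: float, new_rate: float) -> float:
    """Fraction by which the rate fell, relative to the old rate."""
    return (old_rate - new_rate) / old_rate


# Hypothetical baseline: the older model misses 20% of short AI posts.
baseline_fnr = false_negative_rate(0.80)

# Reading 1: a 17% relative reduction of the miss rate.
relative_reading = baseline_fnr * (1 - 0.17)

# Reading 2: a drop of 17 percentage points in the miss rate.
absolute_reading = baseline_fnr - 0.17

print(round(relative_reading, 3), round(absolute_reading, 3))
```

The two readings give quite different miss rates, which is why the distinction matters when comparing detector versions.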

Claude 4.6 improvements

Several users reported false negatives specifically with Claude Opus 4.6. We addressed this by regenerating our dataset to include data from Claude Opus 4.6. After evaluating on our internal test sets of particularly hard examples and running red teaming tests, we are now confident that Pangram detects Claude Opus 4.6 as well as any other frontier LLM.

What's next

AI-generated math, code, and science

Currently, AI-generated code and math are not detected with high accuracy. We are focusing on these use cases right now because of strong customer demand. Math and code are more formulaic, and therefore harder to detect than AI-generated prose, but some of our early experiments are showing promising results.

Continued iteration on humanizers

The humanizer market is constantly evolving, and a growing variety of humanizers has appeared in recent months. We are developing more advanced techniques for detecting humanizers, which we hope to share publicly soon.

The future

Pangram is committed to staying at the forefront of what is possible in AI detection. We keep evolving as the capabilities of large language models (LLMs) continue to grow.

We are also hiring! Visit our careers page to help us build the world's best AI detectors.

Katherine Thai is the founding AI research scientist at Pangram Labs, an AI detection startup. She completed her PhD in Computer Science under the supervision of Mohit Iyyer at the University of Massachusetts Amherst in December 2025, where her work focused on evaluating large language models (LLMs) on tasks related to literary analysis.

