No industry remains untouched by artificial intelligence.

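From doctors and lawyers to marketers and social media influencers, every profession is feeling the effects of AI-generated content. What about journalists? Theirs is a profession that rests on readers' trust. Yet recent research indicates that unregulated AI use is surging across the industry, and AI-generated articles are now appearing even in widely read, trusted newspapers. Just as other industries are re-examining their ethical values and working out appropriate uses of AI, journalism must do the same.

AI tools can automate repetitive tasks and support data-driven storytelling, but the technology can be undermined by bias and "hallucinations." Because there are no legally binding, industry-wide standards for appropriate AI use, each news organization is left to chart this unexplored territory on its own. Below, we review the current frameworks for evaluating the ethical use of AI in newsrooms, analyze their shortcomings, and propose an integrated ethical approach centered on transparency and human oversight.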

A landscape shaped by fragmented and vague guidance

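While AI technology advances at an accelerating pace, ethical governance has not kept up. Several trusted international bodies are leading efforts to close the gap. They aim to help newsrooms regain editorial control and reader trust, yet they still struggle to address the full range of dilemmas facing today's editors, publishers, and writers.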

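In 2021, the United Nations Educational, Scientific and Cultural Organization (UNESCO) adopted its Recommendation on the Ethics of Artificial Intelligence, which places human oversight at its core. The section outlining recommendations for the communication and information sector offers guidance for journalists. It states that AI systems should promote freedom of information, freedom of expression, transparency, and the disclosure of public data, and it encourages all actors in the media industry to bring AI into their work in an ethical way. The framework also calls on countries to foster a transparent educational environment in which the media can report on the harms and benefits of AI, and to help consumers build the digital and media literacy skills needed to counter misinformation and hate speech.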

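Yet of the 44 pages that make up UNESCO's AI ethics policy, the media framework occupies only four paragraphs. Journalism and journalists are rarely mentioned specifically, and the document functions more as a statement of values and a general call for more comprehensive policy in the future. It gives the media very little to rely on.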

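The Institute of Electrical and Electronics Engineers (IEEE) took a similar approach. Its "Ethically Aligned Design" framework supports the freedom of reporters to educate the public about issues surrounding AI and about the importance of human judgment and oversight. But this, too, is a statement of ideals and values rather than concrete guidance for resolving AI dilemmas in the newsroom. How should the media handle disclosure of AI use? How should misuse be dealt with? What counts as misuse in the first place? This uncertainty leaves newsrooms confused and audience trust hanging in the balance; a robust policy would serve as a guidepost through this uncharted territory.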

The path forward: core principles for an ethical journalism AI policy

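Publishers, editors, and journalists urgently need concrete, thorough, and ethically sound guidance for regulating, communicating, and properly justifying the use of AI in the newsroom. In drafting a rigorous, well-informed AI policy, newsrooms should ground their guidance in four non-negotiable principles: transparency, accountability, inclusivity, and fairness.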

The following is a starting point for applying these principles to AI use in the newsroom:

- Transparency means disclosing AI use to readers clearly, honestly, and in plain terms. Whether journalists use AI to draft a lede, conduct research, or produce visuals, readers always have the right to know.

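- Accountability means making clear who is responsible for errors in AI-generated content or research. Newsrooms need to define exactly who answers for it when AI use goes wrong. Is it the developer of the AI program? The journalist? The algorithm itself? Proper accountability means acknowledging mistakes and holding the responsible party to account. It is therefore the newsroom's job to decide who bears responsibility and how such situations are handled.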

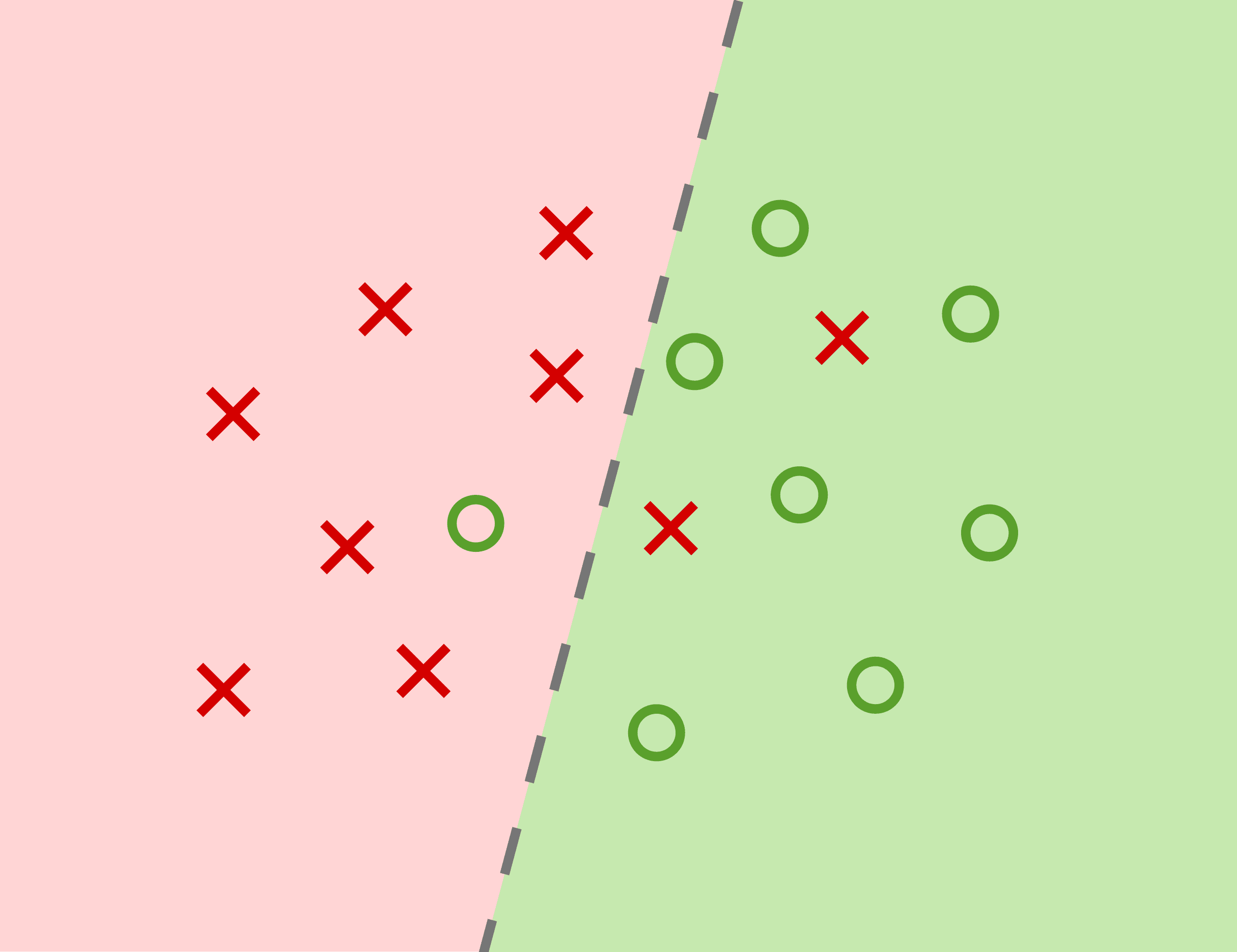

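- Inclusivity means raising awareness of bias in AI tools and reducing its effects. Because an algorithm's training data shapes its output, AI tools risk amplifying the biases present in the society that built them. Newsrooms should keep this in mind whenever they use AI, and their policies should include strategies for investigating and minimizing that bias.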

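- Fairness means applying the policy evenhandedly. Every newsroom team should adhere strictly to the established policy and update it as needed. It should be applied uniformly, departures from it should carry appropriate consequences, and violations should be clearly disclosed to the audience.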

Algorithmic bias and the threat of "pink slime"

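AI models deployed without ethical guidance risk reinforcing social inequality and stereotypes about vulnerable groups, and they can also harden users' existing beliefs and deepen polarization. When UNESCO studied stereotypes generated by AI, it found that large language models assigned women to roles such as housework and childcare at far higher rates than men, and that they produced negative content about gay people and particular ethnic groups. Researchers at Stanford University found that AI models asked to describe speakers of African American English perpetuated extreme racist stereotypes dating from before the civil rights movement. The risk of entrenching prejudice by using inevitably flawed AI systems can also seep into the tasks news organizations delegate to these tools, such as translation, data analysis, and story idea generation. In addition, AI recommendation systems designed to serve personalized content, including news, may keep showing readers only what they already agree with, cementing their preconceptions about the world and closing off their interest in the differing perspectives and information that would broaden their view.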

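The flood of AI-generated content online also risks confusing readers and eroding trust in traditional news media. According to research by the AI detection company Pangram, as many as 60,000 AI-generated news articles are published every day, most notably in technology, beauty, and business. Low-quality news sites and bad actors use AI to churn out low-quality content known as "pink slime" at nearly zero cost in order to collect advertising revenue. Because these content farms muddy the news landscape, it is more important than ever for credible, professional news sites to establish firm AI policies and disclose them to readers.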

Case studies: which news organizations are leading the way?

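Leading news organizations such as The New York Times, the BBC, and ProPublica are balancing innovation and ethics by making human oversight the top priority of their policies, taking control back from the algorithm and placing it in the hands of trusted editors. The BBC, for example, requires staff to clearly signal when AI is used in creating, presenting, or distributing content. Its guidelines also call for care and monitoring with respect to the inherent bias, false information, and plagiarism that AI outputs may contain. All staff and freelancers must submit a proposal to a senior editorial figure and obtain approval before using AI. The BBC guidelines cite, as appropriate uses of AI, showing AI output within a relevant news story and altering the voice of a source who wishes to remain anonymous. ProPublica explicitly uses AI in major investigations to sift through large databases and identify patterns that expose bias in other systems. The New York Times has disclosed that it uses AI to help draft headlines and summaries, generate audio versions of articles, and analyze data.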

In practice: how news organizations can enforce ethics

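To develop appropriate, thorough AI guidelines consistent with the Society of Professional Journalists (SPJ) Code of Ethics, news organizations should assemble interdisciplinary teams that combine technical and ethical expertise and cover every angle of a world that AI has substantially changed. Human oversight should always sit at the center of AI use, and any AI output used in a news story needs to be verified continuously for accuracy.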

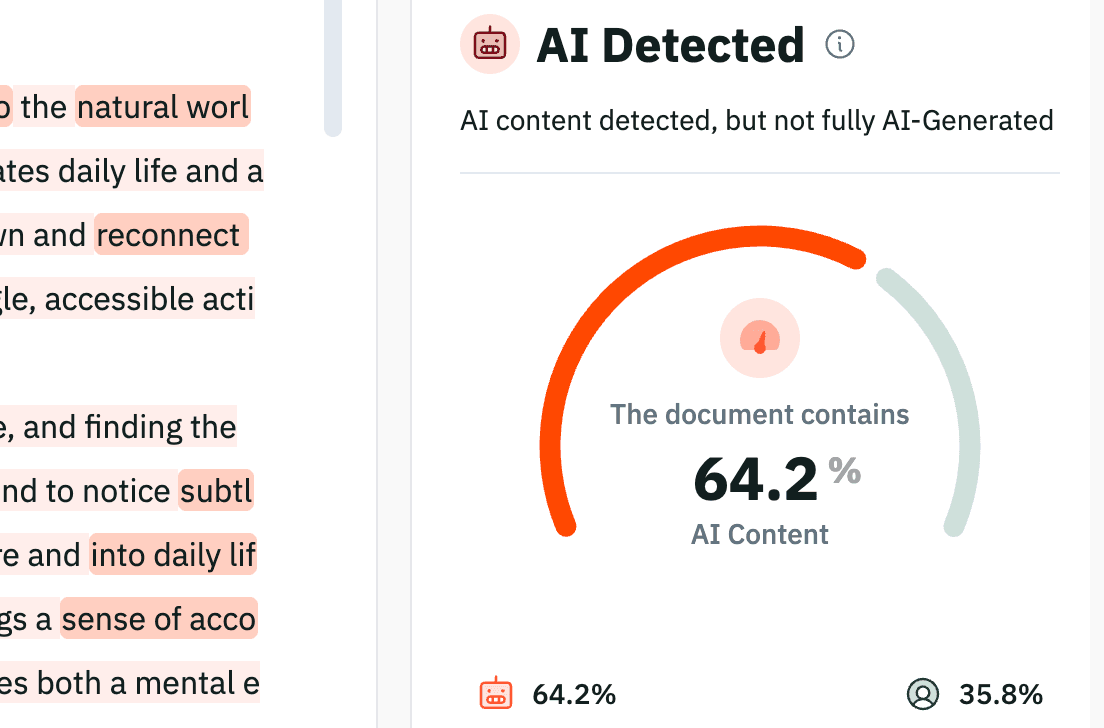

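AI should complement human capabilities, not replace trustworthy journalism built on careful reporting. To enforce policy and ensure compliance, news organizations should adopt verification tools such as Pangram to detect undisclosed AI-generated content and inappropriate uses. AI tools offer appealing efficiency, but they cannot always uphold the credibility and ethical standards of a good news organization. By adopting a framework rooted in transparency and accountability, news organizations can make use of AI tools without undermining the democratic function of the press.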

In an age of widespread AI, verify the authenticity of your content now so that your publication can maintain the highest standard of trust.
