What does an AI detection score mean?

You run a student's report or a freelancer's article through an AI detection tool, and the screen shows a big number: "65% AI detected." Now what?

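An AI detection score is not a clear-cut "pass" or "fail" like traditional grading criteria. The line between "fully AI-generated" and "AI-edited" keeps shifting, and Pangram's detection system evolves along with it.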

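This guide explains that single percentage in plain language. It covers how AI score checkers calculate the percentage, what a confidence interval is, and how to choose the AI detector threshold at which you should take action.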

What does the percentage actually show?

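When you scan a document with an AI detection tool, it returns an AI detection score, for example "50%." This percentage is not proof that half of the document is fake or AI-generated. It means that, by the detector's estimate, about 50% of the document contains text that was generated by AI or written with AI assistance.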

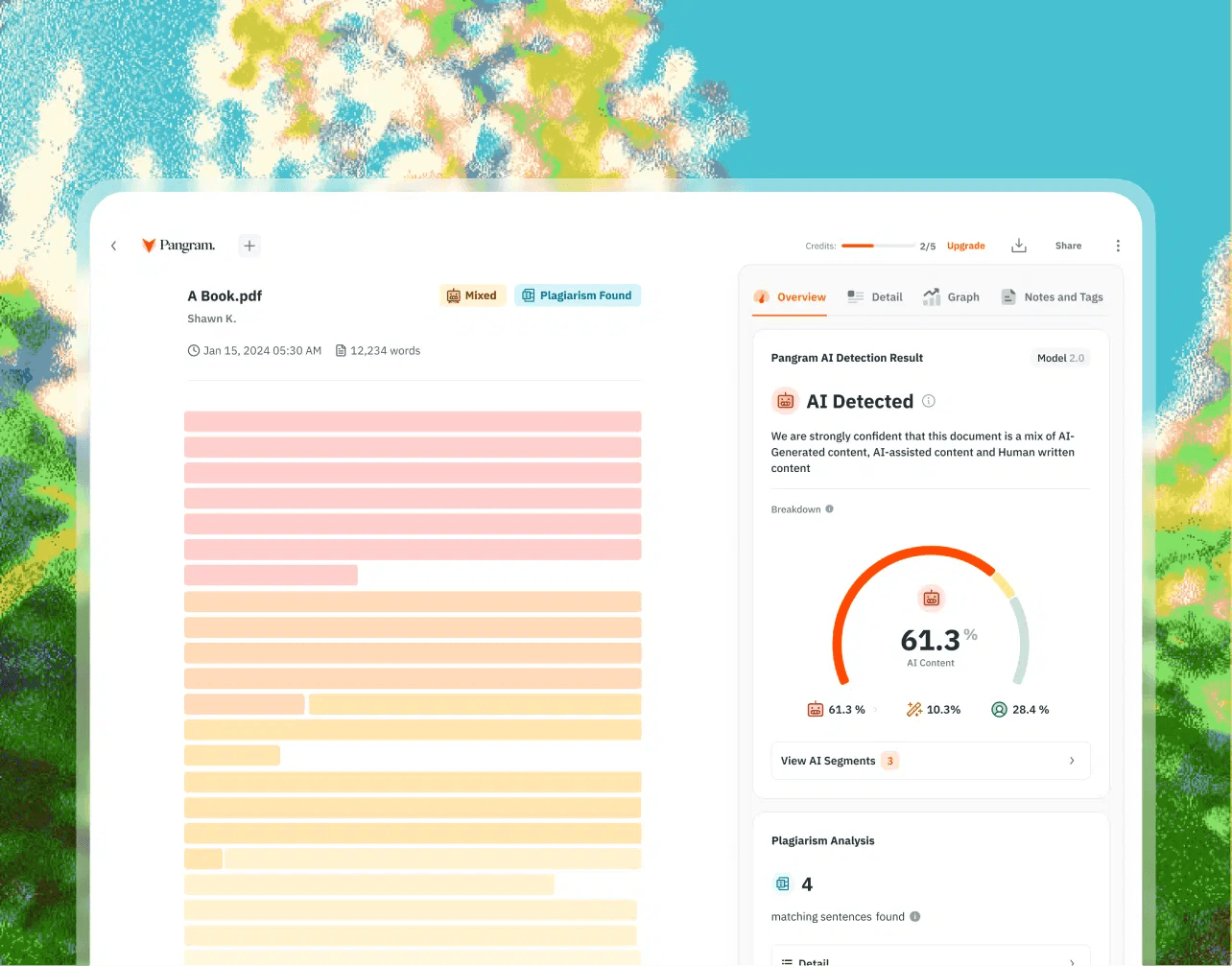

Example of an AI detection score

Advanced AI detection tools do not treat a document as one giant block of text. Instead, they break the text into segments such as sentences and paragraphs, and score each of these units individually.

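If a 10-page paper scores 30%, roughly three pages' worth of text show patterns typical of LLMs, such as a lack of burstiness or predictable syntax. The score reflects where the detector found those patterns; it is not a verified measurement that exactly 30% of the document was written by AI.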
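
To make the arithmetic concrete, here is a minimal Python sketch that assumes the document score is the word-weighted share of segments the detector flags as AI. The `Segment` class, its `is_ai` field, and the weighting by word count are illustrative assumptions, not Pangram's published method.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One scored unit of text, such as a sentence or paragraph."""
    text: str
    is_ai: bool  # hypothetical per-segment verdict from the detector


def document_ai_share(segments: list[Segment]) -> float:
    """Fraction of words that sit in segments flagged as AI (0.0 to 1.0)."""
    total = sum(len(s.text.split()) for s in segments)
    if total == 0:
        return 0.0
    flagged = sum(len(s.text.split()) for s in segments if s.is_ai)
    return flagged / total


# A 10-page paper, 300 words per page, with the first 3 pages flagged.
page = "word " * 300
paper = [Segment(page, is_ai=(i < 3)) for i in range(10)]
print(f"{document_ai_share(paper):.0%}")  # → 30%
```

Weighting by words rather than by segment count keeps one flagged one-line sentence from counting as much as a flagged full page.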

How to read an AI score distribution chart

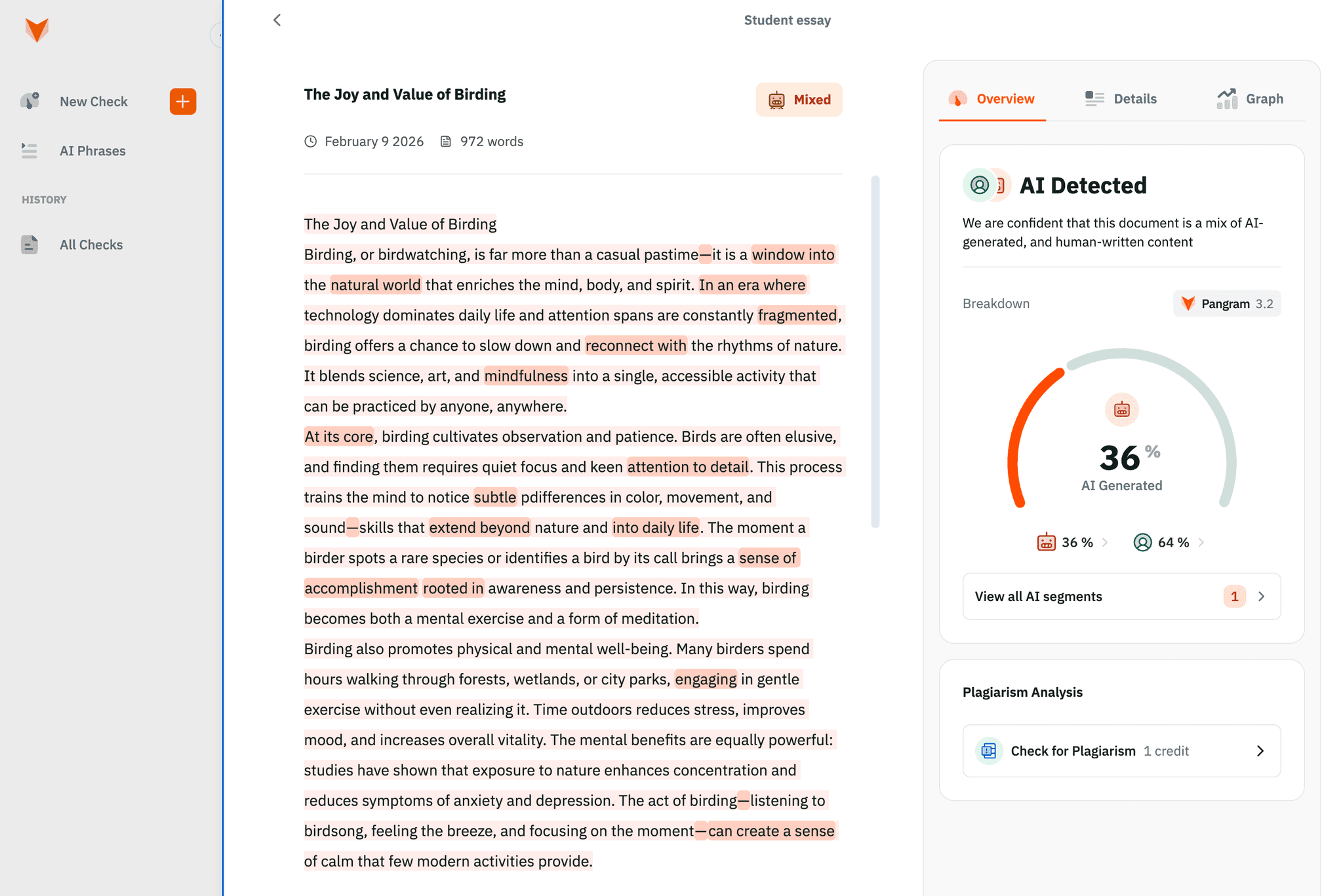

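If a document shows a low score (for example, 30% AI-generated), that usually points to a hybrid document: typically one a human wrote and then edited with the help of AI tools. A high score such as 85%, on the other hand, means the text is very likely to have been generated entirely by AI.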

Mixing AI-generated and human-written content

Low to moderate AI detection scores are common when the writer:

- uses tools such as Grammarly.

- asks an LLM such as ChatGPT to "smooth out" a paragraph they wrote.

- uses AI to translate from their native language into English.

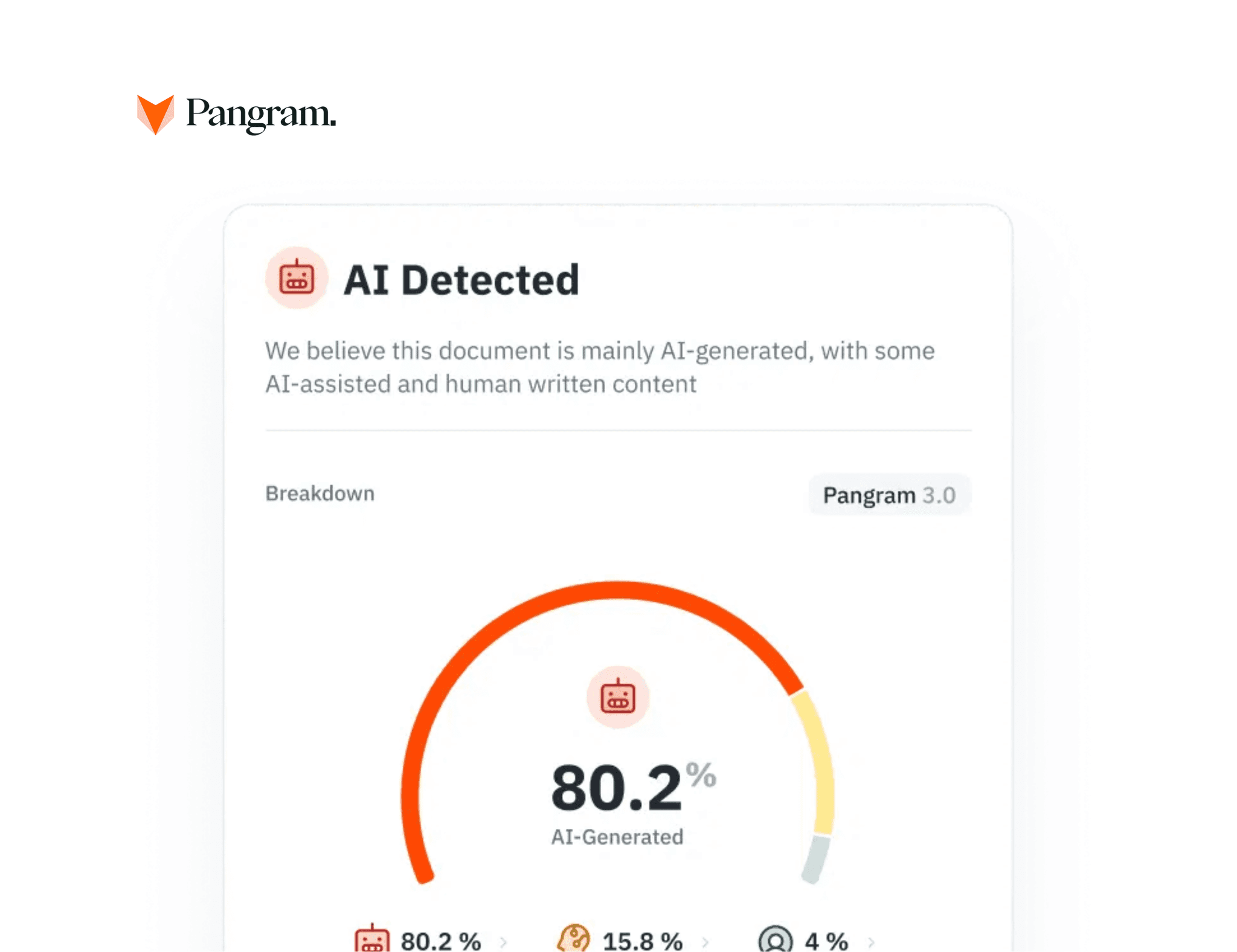

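High AI detection scores usually appear when the linguistic features of the text are overwhelmingly those of AI generation. This typically means the writer prompted an LLM and copied and pasted its output with only minor edits.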

Are AI score checkers accurate?

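We can't speak for other tools' results, but enterprise AI checkers such as Pangram report very high accuracy (99.98%). To help you judge how far to trust a given result, most enterprise AI checkers also display a "confidence interval" showing how certain the model is about its own detection rate.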

The answer to "Are AI detectors accurate?" rests on two facts: statistical models are used to decide whether a text was generated by AI, and those models work with probabilities, not absolute certainty.

A "high confidence" flag means the text matches patterns known from LLM training data, so the AI detection rate is likely to be sound. That does not make the rate absolutely accurate, but it is probably correct.

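A "low confidence" flag means the text shows some signs of AI generation, but the model does not have enough data to reach a definitive judgment. Many low-confidence flags occur because a text fragment is too short to evaluate accurately.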
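
Why short texts earn low confidence can be illustrated with a standard Wilson score interval, treating each segment as a yes/no AI verdict. This is a swapped-in textbook statistic, not Pangram's own calculation, and the `max_width` cutoff of 0.2 for a "high" label is a hypothetical choice. The same 30% share produces a wide interval from 10 segments and a much narrower one from 100.

```python
import math


def wilson_interval(flagged: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the share of flagged segments (z=1.96 is about 95%)."""
    if total <= 0:
        raise ValueError("total must be a positive number of segments")
    p = flagged / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2))
    return max(0.0, center - half), min(1.0, center + half)


def confidence_label(flagged: int, total: int, max_width: float = 0.2) -> str:
    """Label the estimate 'high' only if the interval is narrow (hypothetical cutoff)."""
    low, high = wilson_interval(flagged, total)
    return "high" if high - low <= max_width else "low"


# Same 30% share, different amounts of evidence.
print(confidence_label(3, 10))    # → low  (interval roughly 11% to 60%)
print(confidence_label(30, 100))  # → high (interval roughly 22% to 40%)
```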

If your current AI checker only gives a binary "AI or not" verdict, a tool like Pangram can help you pinpoint the specific passages that suggest AI writing.

"Mixed" results

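In modern workflows, the most common kind of content is "mixed": a combination of human and AI writing and editing. For that reason, tools such as Pangram 3.0 classify text on a spectrum: "Fully human," "Lightly AI-assisted," "Moderately AI-assisted," and "Fully AI-generated."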

AI score result (mixed)

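Grading AI-generated text in bands matters: a student who only used a spell checker and was rated "Lightly AI-assisted" at 10% should not be treated the same way as a student who submitted an essay rated 95% "Fully AI-generated." The highlighted passages show exactly which parts the detector attributes to AI.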
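
A minimal sketch of mapping a document score onto the four-band spectrum follows. The cutoffs are hypothetical values chosen only so that the examples in this article land in the expected bands (10% as lightly assisted, 85% and 95% as fully AI-generated); this article does not state how Pangram 3.0 draws its band boundaries, and it may classify at the segment level instead.

```python
from bisect import bisect_right

# Hypothetical band boundaries; a score equal to a cutoff falls into the higher band.
CUTOFFS = [0.05, 0.35, 0.85]
LABELS = [
    "Fully human",
    "Lightly AI-assisted",
    "Moderately AI-assisted",
    "Fully AI-generated",
]


def spectrum_label(score: float) -> str:
    """Map a document score in [0, 1] to one of the four spectrum bands."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    return LABELS[bisect_right(CUTOFFS, score)]


print(spectrum_label(0.10))  # → Lightly AI-assisted
print(spectrum_label(0.95))  # → Fully AI-generated
```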

What AI score threshold calls for action?

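There is no universal "magic number" that requires corrective action, but as a best practice, an AI detection score below 20% can usually be attributed to ordinary digital writing assistants. A score above 60%, on the other hand, often calls for a direct conversation about the authenticity of the writing.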

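Set your AI detector thresholds to match your AI policy. For example, if your policy says "AI may be used for brainstorming but not for writing the text itself," a score of 40% warrants investigation. If your policy says "AI may not be used at any stage of the writing process," even a score of 15% will likely warrant investigation.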
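
The threshold logic above can be written as a small lookup. The policy keys are hypothetical names; the 40% and 15% review thresholds come from the policy examples in this section, and the 20% and 60% bands from the general guidance. Scores between 20% and 60% with no specific policy are left to human judgment, since the text gives no rule for them.

```python
from typing import Optional

# Hypothetical policy keys; thresholds are the examples given in the text.
REVIEW_THRESHOLDS = {
    "brainstorming_only": 0.40,  # AI allowed for ideas, not for writing the text
    "no_ai": 0.15,               # AI not allowed at any stage of writing
}


def recommended_action(score: float, policy: Optional[str] = None) -> str:
    """Suggest a next step for a document score in [0, 1]."""
    if policy is not None:
        # Policy-specific threshold: reaching it triggers an investigation.
        return "investigate" if score >= REVIEW_THRESHOLDS[policy] else "no review needed"
    # General guidance when no specific policy applies.
    if score < 0.20:
        return "no review needed"  # typically ordinary writing-assistant use
    if score > 0.60:
        return "talk with writer"  # discuss authenticity directly
    return "use judgment"          # the text gives no rule for this middle band


print(recommended_action(0.15, "no_ai"))               # → investigate
print(recommended_action(0.30, "brainstorming_only"))  # → no review needed
```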

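Treat the AI detection score as a diagnostic tool. When a score comes back high, use the passages Pangram highlights and its "AI phrases" report to talk with the writer and ask them to explain their writing process. This helps clear up misunderstandings and makes appropriate guidance possible, leading to a better outcome for both sides.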

AI detection is not a simple pass/fail binary

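Pangram is a fine-grained analysis tool that makes visible the process behind modern writing. By understanding exactly what an AI score means, professionals can protect integrity while treating writers fairly.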

Stop guessing what the numbers mean. Pangram's segment analysis gives you detailed, plain-language insight into how a text was written.
