AI Detection in Higher Education

AI Detection for Universities

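Uphold academic standards with the most accurate AI detection tool built for higher education. With 99.98% accuracy, you can gain clear visibility into AI use, screen research papers, and protect your institution's reputation.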

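Evaluated by third parties including the University of Maryland and the University of Chicago, Pangram is recognized as the most proven, reliable, and accurate AI detection tool on the market, and is trusted by researchers.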

Academic Integrity

Protect your campus from AI-driven academic misconduct

Protect Research Integrity

Keep AI-generated "slop" out of peer review and grant applications. Our models identify AI-generated content even in complex, technical academic writing.

Fair Treatment of International Students

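Avoid bias against non-native speakers. Pangram has been shown to identify AI through linguistic patterns rather than perplexity alone, which protects non-native English-speaking students from false accusations.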

Enterprise-Grade Privacy

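Fully compliant with the Family Educational Rights and Privacy Act (FERPA) and SOC 2 Type 2. We never use your student papers or proprietary research data to train our models.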

Features

Why should universities use Pangram?

Years of Research

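Built on proprietary technology developed over years of research, not on an open-source model or a rebranded commercial large language model.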

Trained on Diverse Datasets

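Pangram achieves an industry-leading false positive rate (FPR) through diverse datasets, hard negative mining, and active learning, rather than relying on entropy and burstiness metrics that often fail in practice.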

Detects All Large Language Models

Pangram detects content generated by all major language models, including ChatGPT, Claude, Gemini, and Llama, making it a comprehensive AI detection solution.

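Our models are trained on academic writing and coursework, not just marketing or web content. This reduces false positives on legitimate student writing while maintaining high sensitivity to AI-generated text. Learn how instructors use Pangram to check assignments.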

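We provide fine-grained highlighting at the sentence and paragraph level that distinguishes AI-generated passages from human-written text. This lets instructors make more nuanced judgments instead of relying on a binary pass/fail verdict, which matters especially for multi-chapter academic work.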

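Yes. Pangram supports detection across technical, analytical, and narrative writing styles, so it works for everything from lab reports and programming assignments to papers, literature reviews, and policy documents.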

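Yes. Pangram adheres to the FERPA-compliant data privacy and handling standards required of U.S. educational institutions. Student submissions are processed securely and are not used to train external models.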

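Universities can configure data retention policies to match institutional requirements. Data can be deleted automatically once analysis is complete, or retained for audits and academic integrity review workflows.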

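Yes. Many institutions use Pangram not only for detection but also to put their AI policies into practice, by defining acceptable and unacceptable use cases and applying consistent review standards across departments.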

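No. Pangram is a decision-support tool, not an enforcement mechanism. The system provides confidence scores and explanations with its results so that instructors and academic integrity committees can make informed judgments.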

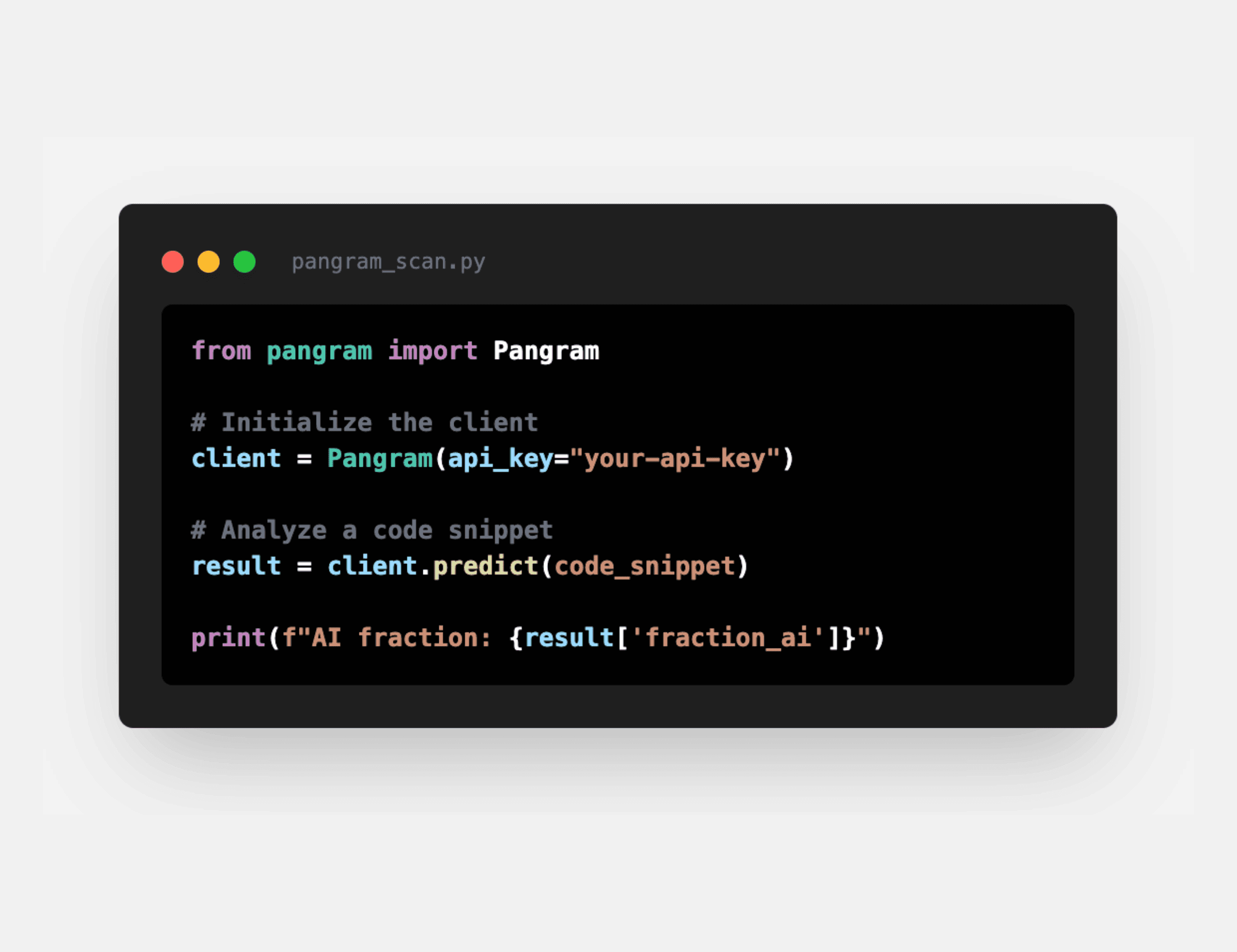

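Yes. Many universities use our high-throughput API to analyze AI usage trends across large datasets, supporting research on academic integrity, AI adoption patterns, and pedagogical impact.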

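Yes. Administrators can aggregate anonymized data across courses, departments, or semesters to understand how generative AI is affecting learning outcomes and assessment strategies.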

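Pangram supports integrations and workflows compatible with common learning management system (LMS) environments, including Canvas, Blackboard, Moodle, and Brightspace, making it easy to bring AI detection into existing assignment submission and grading processes.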

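Yes. Many institutions choose to share detection results with students as part of an educational or corrective process, reinforcing responsible AI use rather than relying solely on punitive measures.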

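The system emphasizes explainability and transparency. Highlighted passages, confidence scores, and contextual signals help reduce over-reliance on any single metric and support fair academic review.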
